Since the early days of quantum computing, progress in the field has been measured by ever-increasing qubit counts, the benchmark companies like Google and IBM use to showcase their dominance in quantum development. Yet this metric doesn’t tell the whole story, writes Professor Ashley Montanaro, co-founder and CEO of Phasecraft.

As we’ve learned more about the power of quantum computing, gate fidelity (how well we can manipulate the qubits to undertake computations) has emerged as an equally important metric. Gate fidelity isn’t just a way to evaluate today’s Noisy Intermediate-Scale Quantum (NISQ) computers; it is also key to finally paving the way to practical quantum advantage, when quantum computers outperform classical ones for useful real-world applications.

The qubit obsession

This emphasis on qubits made sense in quantum’s early days. Qubit count is an easily quantifiable metric, providing a way to gauge and compare processors, much as classical computers were compared on clock speeds, or smartphone cameras on megapixels. Creating a stable qubit was a significant achievement, whilst precision of operations was less of a concern.

Increasing the number of qubits was a natural next step. Quantum computers with fewer than 40-50 qubits can easily be simulated by their classical counterparts, so it was essential to get beyond this barrier to achieve a meaningful quantum advantage.
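To see roughly where that 40-50 qubit barrier comes from, here is a minimal sketch of the memory a brute-force classical simulation would need. It assumes a full state-vector simulation that stores one double-precision complex amplitude (16 bytes) per basis state; the function name `statevector_bytes` is purely illustrative, and cleverer classical methods can do better on some circuits.

```python
def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory needed to store every amplitude of an n-qubit state.

    Assumption: brute-force state-vector simulation with one complex128
    (16-byte) amplitude for each of the 2**n basis states.
    """
    return (2 ** n_qubits) * bytes_per_amplitude


def human_readable(n_bytes):
    """Format a byte count using binary units (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(n_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024


if __name__ == "__main__":
    # Each extra qubit doubles the memory requirement.
    for n in (30, 40, 50):
        print(n, "qubits:", human_readable(statevector_bytes(n)))
```

Under these assumptions, 30 qubits fit in 16 GiB, 40 qubits need 16 TiB, and 50 qubits need 16 PiB, which is why brute-force simulation stops being practical in this range.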

But now that we’re past this stage, gate fidelity is emerging as a more relevant metric. As qubit numbers have increased, so too has the potential to run meaningful algorithms on quantum hardware. With this comes an increased chance of errors in the computations, due to noise and decoherence. It is therefore more important than ever to perform quantum operations accurately.

The case for gate fidelity

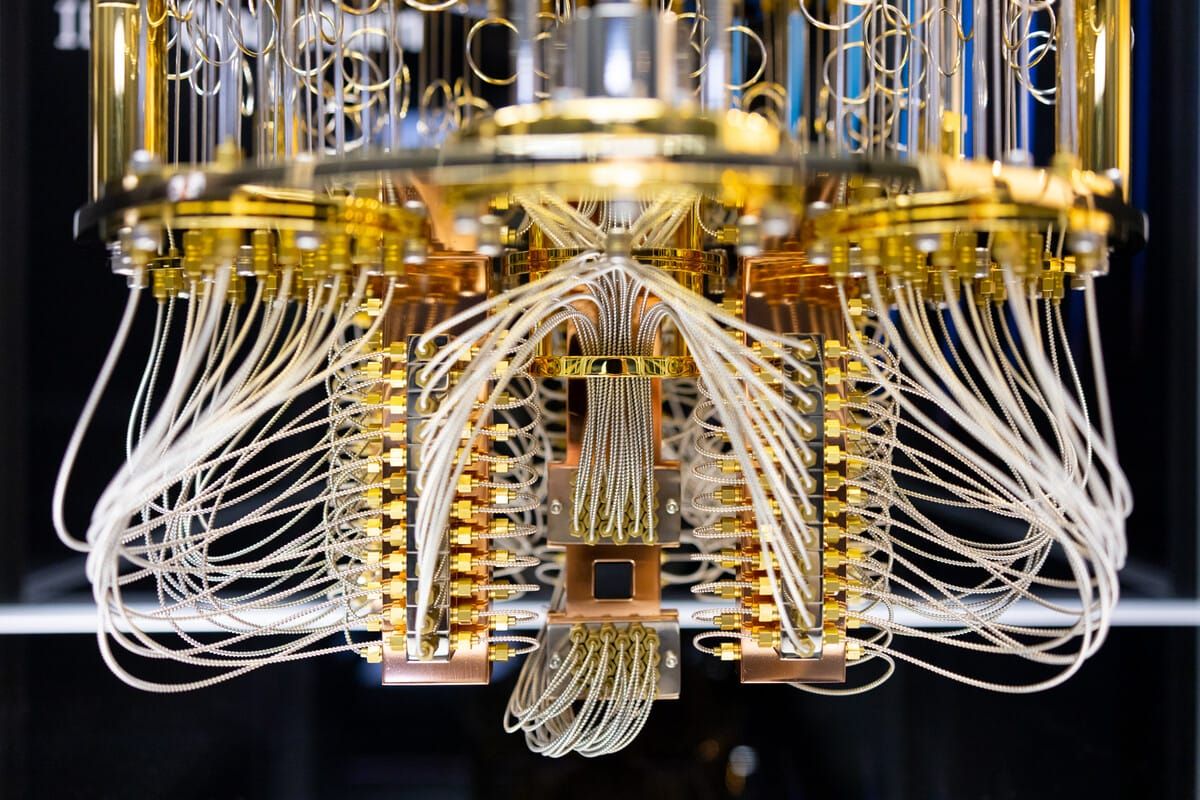

Quantum computers work by changing the state of qubits through quantum operations (gates), with quantum algorithms determining the nature of gates and the order in which they must be performed (i.e. the quantum circuit) to achieve the desired outcome.

Gate fidelity measures how accurately a quantum gate does its job: specifically, it quantifies how closely the actual operation of a gate (how it actually performed) matches its ideal, theoretical operation (how the gate should be working). This closeness is expressed as a percentage, with 100% fidelity meaning the gate performed with no errors.
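The article does not say which definition of fidelity it has in mind. One widely used choice is the average gate fidelity, which for two unitaries U (ideal) and V (actual) on a d-dimensional system is (d·F + 1)/(d + 1), where F = |Tr(U†V)|²/d². The sketch below applies that formula to a hypothetical single-qubit X gate whose rotation angle is slightly off. The helper names are illustrative, and the model covers only coherent (unitary) errors, not the full range of noise on real hardware.

```python
import math


def dagger(m):
    """Conjugate transpose of a square matrix given as nested lists."""
    n = len(m)
    return [[m[j][i].conjugate() for j in range(n)] for i in range(n)]


def matmul(a, b):
    """Product of two square matrices given as nested lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def average_gate_fidelity(ideal, actual):
    """Average gate fidelity between two unitaries of dimension d.

    Uses F_avg = (d * F_pro + 1) / (d + 1), with
    F_pro = |Tr(ideal^dagger @ actual)|^2 / d^2.
    """
    d = len(ideal)
    product = matmul(dagger(ideal), actual)
    overlap = sum(product[i][i] for i in range(d))
    process_fidelity = abs(overlap) ** 2 / d ** 2
    return (d * process_fidelity + 1) / (d + 1)


def rx(theta):
    """Rotation about the X axis by angle theta (an X gate up to phase at pi)."""
    c = math.cos(theta / 2)
    s = math.sin(theta / 2)
    return [[complex(c, 0), complex(0, -s)],
            [complex(0, -s), complex(c, 0)]]


X_GATE = [[0j, 1 + 0j], [1 + 0j, 0j]]

if __name__ == "__main__":
    # Hypothetical error model: the control pulse over-rotates by 1%.
    over_rotated = rx(math.pi * 1.01)
    print(f"{average_gate_fidelity(X_GATE, over_rotated):.6f}")
```

In this toy model a 1% over-rotation still yields a fidelity a little above 99.98%, which illustrates how tightly control must be held to reach the highest fidelities.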

Any quantum circuit, and hence any algorithm, comprises multiple gates. Many of these gates are executed sequentially; the number of consecutive gates is termed the circuit depth, and more complex calculations require deeper circuits. Because errors on consecutive gates accumulate, gate fidelity translates into a measure of how many gates you can run on a quantum computer before the computation fails. This sets a limit on the maximum complexity of any algorithm that can run successfully on the hardware.
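A rough way to picture this accumulation is to treat each gate as succeeding independently with probability equal to its fidelity, so a circuit of n gates runs error-free with probability about fidelity to the power n. This is a simplifying assumption, since real errors can be correlated and not every error ruins a result, and the function name below is illustrative.

```python
def circuit_success_probability(gate_fidelity, n_gates):
    """Chance that all n gates run without error.

    Assumption: each gate fails independently with probability
    (1 - gate_fidelity), and any single failure spoils the result.
    """
    return gate_fidelity ** n_gates


if __name__ == "__main__":
    # Compare two hypothetical devices across increasing gate counts.
    for fidelity in (0.99, 0.999):
        for n_gates in (10, 100, 1000):
            p = circuit_success_probability(fidelity, n_gates)
            print(f"fidelity={fidelity:.3f} gates={n_gates:5d} success={p:.4f}")
```

In this model, a device with 99% fidelity keeps only about a 37% chance of an error-free run after 100 gates, while a 99.9% device reaches that same point only after about 1,000 gates.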

The engineering dilemma

Achieving high gate fidelity is easier said than done. Implementing a gate can introduce a range of errors and noise, whilst the type of gate adds to this complexity. Single-qubit gates require precise control to avoid errors, whilst two-qubit entangling gates, which are the key element that makes the computation quantum, require a high level of control of the interactions between two qubits simultaneously. As a result, two-qubit gates have higher error rates and are more sensitive to environmental factors.

Errors exist in classical computation too, but correction strategies, such as storing redundant copies of each bit and taking a majority vote, ensure that the correct value is returned. Quantum error correction algorithms have been developed, but to be effective they need large numbers of extra qubits to store the additional information used to detect and correct errors. Estimates vary, but around 1,000 physical qubits will be required for each logical qubit.
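The classical idea of recovering the right value from several noisy copies of a bit can be made concrete with a repetition code and a majority vote. The sketch below assumes each copy flips independently with the same probability, and the function name is illustrative. It is a classical illustration only: quantum codes cannot simply copy a state and must also handle phase errors, which is part of why their overhead is so much larger.

```python
from math import comb


def majority_vote_failure(n_copies, flip_probability):
    """Probability that a majority vote over n noisy copies is wrong.

    Assumptions: n_copies is odd, and each copy of the bit is flipped
    independently with probability flip_probability. The vote fails
    when more than half of the copies are flipped.
    """
    if n_copies % 2 == 0:
        raise ValueError("n_copies must be odd so the vote cannot tie")
    p = flip_probability
    return sum(
        comb(n_copies, k) * p ** k * (1 - p) ** (n_copies - k)
        for k in range(n_copies // 2 + 1, n_copies + 1)
    )


if __name__ == "__main__":
    # Adding redundancy drives the failure rate down quickly.
    for n in (1, 3, 5, 7):
        print(n, "copies:", majority_vote_failure(n, 0.01))
```

With a 1% flip rate per copy, three copies already cut the failure rate to roughly 0.03%, at the cost of storing three times as many bits.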

Despite the rapid growth in qubit numbers we have witnessed in the recent past, reaching the threshold for “fault-tolerant quantum computation,” where we can run error-corrected codes on quantum computers, is still far from reality.

For now, the challenge is to make the most of the existing, imperfect quantum computers and develop ways to use them to find solutions to practical problems. Gate fidelity plays a critical role in bounding the size and complexity of algorithms that can be run.

Solving this dilemma

Hardware companies across the world have been working on these challenges by simplifying architectures and increasing control. Companies including Quantinuum and Oxford Ionics have passed the so-called “three nines” threshold for two-qubit gate fidelity. With gate fidelities greater than 99.9%, it is now possible to implement around 1,000 entangling operations before errors compromise the final result, opening the possibility of exciting applications soon.
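The “around 1,000 operations” figure is consistent with a simple back-of-envelope estimate: with an error rate of 0.1% per gate, you expect about one error after roughly 1/(1 - 0.999) = 1,000 gates. The sketch below turns that into a gate budget. It assumes independent errors and, as an illustrative choice, treats the result as compromised once the chance of an error-free run drops below 1/e; the function name and the threshold are both assumptions.

```python
import math


def gate_budget(gate_fidelity, min_success=math.exp(-1)):
    """Largest gate count that keeps the error-free probability above a floor.

    Assumptions: gates fail independently, and min_success (default 1/e,
    the point where about one error is expected) is an arbitrary
    illustrative threshold for a "compromised" result.
    """
    if not 0 < gate_fidelity < 1:
        raise ValueError("gate_fidelity must be strictly between 0 and 1")
    return math.floor(math.log(min_success) / math.log(gate_fidelity))


if __name__ == "__main__":
    # Each extra "nine" of fidelity buys roughly ten times more gates.
    for fidelity in (0.99, 0.999, 0.9999):
        print(f"fidelity={fidelity}: about {gate_budget(fidelity)} gates")
```

The same arithmetic shows why software matters too: for a fixed gate budget, cutting the number of gates an algorithm needs has the same effect as raising the fidelity of the hardware.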

At Phasecraft, we’ve taken a software-led approach and demonstrated a way of drastically cutting the number of quantum gates needed to run simulations, by a factor of more than a million in some cases. We’ve reduced the complexity of simulating the time-evolution of a quantum materials system by 400,000x, run the largest-ever simulation of a materials system on actual hardware, by a factor of 10, and proved for the first time that near-term quantum optimization algorithms can outperform classical algorithms.

This complementary hardware-software mix is paving the way towards practical quantum computers. Indeed, IBM’s roadmap, having historically centred on size, is now focused on getting a smaller number of qubits to work more efficiently to unlock quantum’s true, real-world advantage.

Fidelity paving the way to practical advantage

These breakthroughs are just two examples of why focusing on gate fidelity is the future of quantum innovation. There is no point in increasing qubit counts if improvements in error rates are not made in tandem. The priority now is to develop high-fidelity gates fast enough to perform logical operations in a realistic amount of time and to fabricate more and better physical qubits to build error-corrected logical qubits.

The good news is that several of today’s platforms are progressing towards meeting these requirements, which are necessary for quantum computing to have a tangible impact on the world, from discovering more efficient battery materials to improving energy grid efficiency. Focusing on gate performance could bring this impact within a few years, rather than a decade. We need to shift from focusing on system size towards what we can and should be doing with these systems today.