Troy Hunt’s HaveIBeenPwned site has become the go-to repository of leaked usernames and passwords. The site hosts over 11 billion leaked accounts and 600+ million compromised passwords – the database also underpins tools like Firefox Monitor that alert users if their details have been exposed in a data breach.

The Australian founder of the site has long been an advocate of the cloud – noting some years back that a nicely optimised cloud hosting setup meant he could support over 141 million monthly queries for ~A$0.2 per day; a hugely cost-effective approach built primarily on Azure Functions and Cloudflare Workers.

Microsoft provides storage for all of the data that he has collated. Cloudflare caches most of it on its edge servers, which reduces requests to the origin and avoids egress bandwidth charges from Azure.

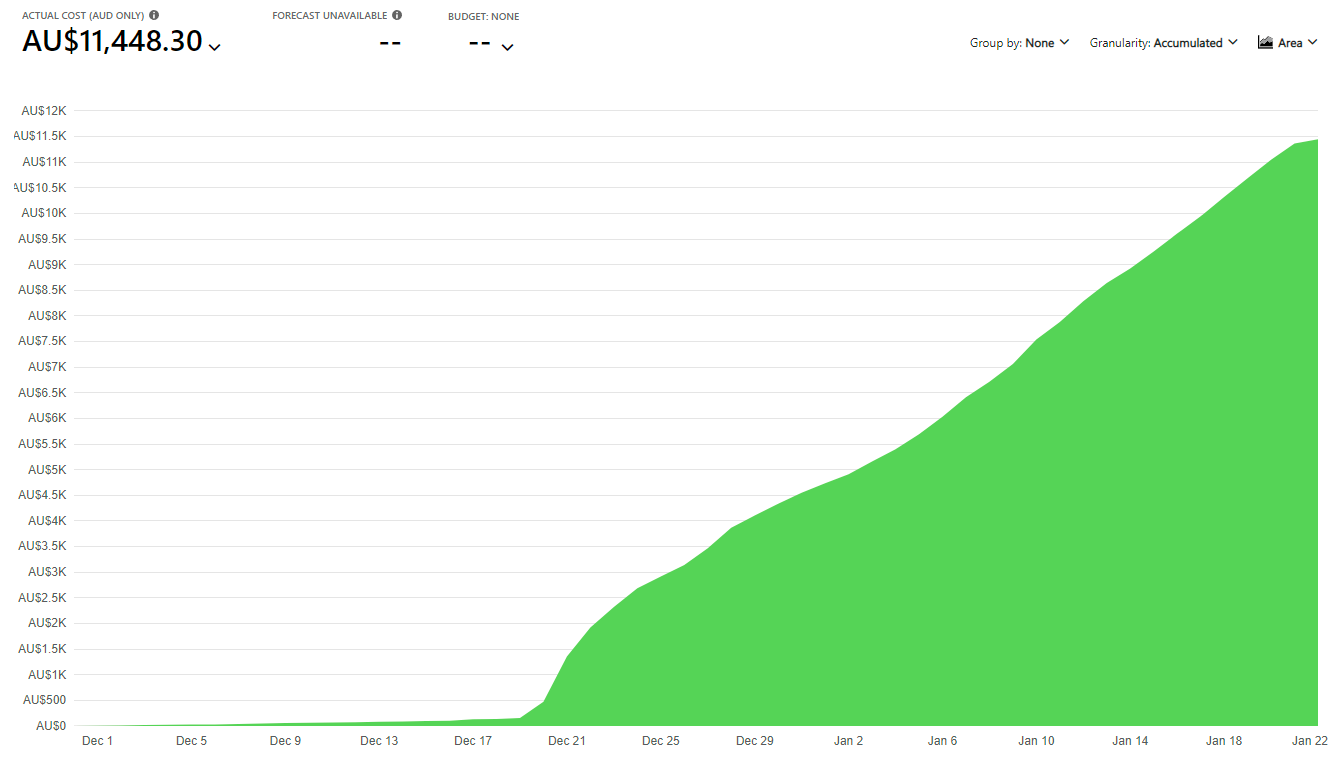

Nobody is immune to cloud bill shock, however, and in a blog post this week the information security author and instructor detailed how he had been unexpectedly stung by an A$11,000 Azure bill. His findings deserve wider sharing and are an important reminder of how easy it is to get caught napping on your cloud setup.

Stepping back: many adopters have been stung on their cloud bills. Configuration can require more manual finessing than many give credit for and some simple oversights have caught many users out, across most cloud platforms. (The UK’s Home Office is one public sector entity that’s been frank about its lessons on this front, in a 2019 blog detailing how it cut its cloud bill by 40% after several bill shocks, in part by shifting temporary jobs, which made up 30% of the compute powering its non-production containerised clusters, to spot instances.)

Often in a cloud bill shock incident – when you face a huge and unexpected charge from your cloud provider – it can be hard to understand the precise cause. In a handy blog, Troy detailed his situation.

HaveIBeenPwned Azure bill shock: what happened?

A synopsis: HaveIBeenPwned was set up to offer downloadable hashes in Pwned Passwords. These were cached at Cloudflare’s edge nodes, so that bandwidth from the origin service was negligible.

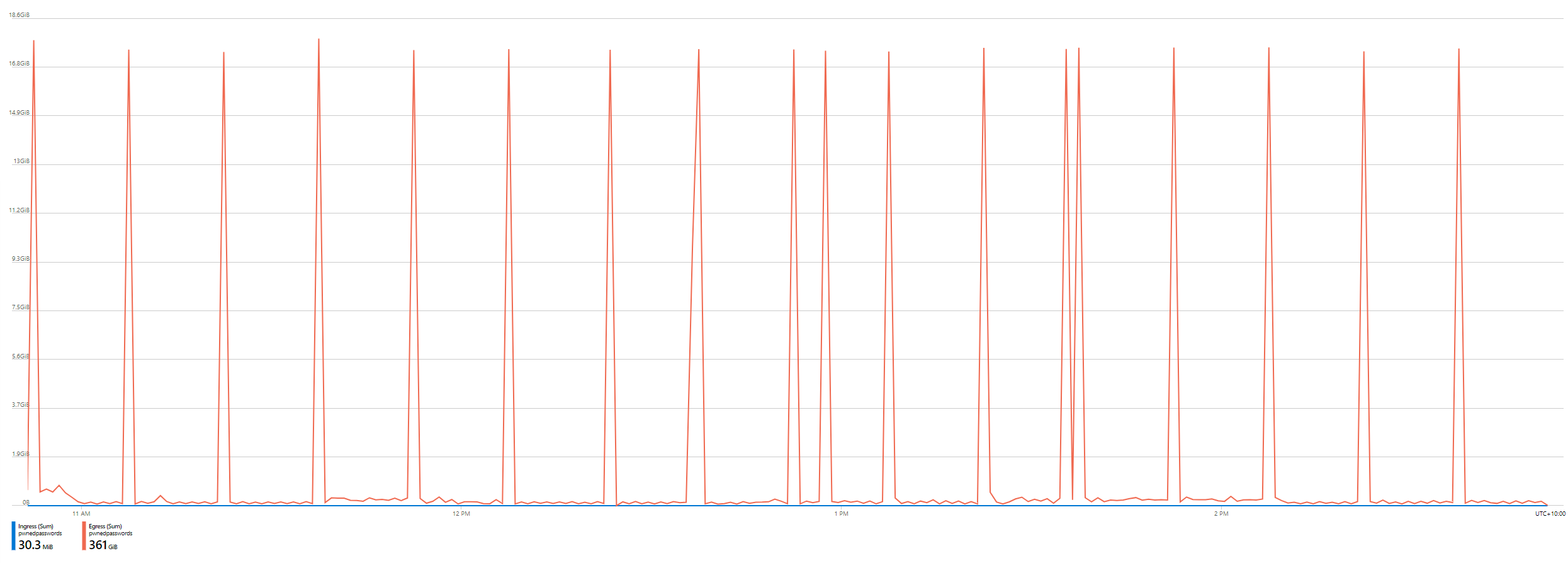

But in December 2021 that monster bill hit from Azure, with the charges falling predominantly under the “bandwidth” service name and the “data transfer out” meter. Drilling down, the bandwidth use was showing regular spikes of 17.3GB. Looking even more closely, the requests were appearing highly regularly in his logs and stemmed from a Cloudflare IP address, confirming that the traffic was routing through Cloudflare’s infrastructure.

Quickly dropping in a firewall rule to provide a temporary bit of pain relief, Troy investigated his Cloudflare settings and couldn’t immediately spot the problem; looking at the properties of the files being downloaded in Azure’s blob storage, he also couldn’t see any changes. To cut a long story short, a setting on his Cloudflare plan that capped the maximum cacheable file size at 15GB was ultimately to blame. The launch of the Pwned Passwords ingestion pipeline for the FBI, along with hundreds of millions of new passwords provided by the NCA, had meant HaveIBeenPwned had started hosting new zip files, each of them 17.3GB and slipping the net.
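The failure mode is simple enough to sketch: any file larger than the CDN's cacheable limit is never cached, so every download goes back to the origin. The Python below is a minimal illustration under stated assumptions, not Troy's tooling or a Cloudflare API. The file names and the 11GB "old" file size are hypothetical, and treating the limit as decimal gigabytes is also an assumption; only the 15GB limit and the 17.3GB file size come from his account.

```python
GB = 1000 ** 3  # assumption: decimal gigabytes


def uncacheable(files, max_cacheable_bytes):
    """Return the names of files too large for the CDN to cache."""
    return [name for name, size in files.items() if size > max_cacheable_bytes]


# Hypothetical inventory: names and the 11GB size are invented for illustration.
files = {
    "pwned-passwords-old.zip": 11 * GB,
    "pwned-passwords-new.zip": int(17.3 * GB),
}

# 15GB is the plan limit described in the article.
oversized = uncacheable(files, 15 * GB)
print(oversized)
```

Running a check like this against the storage account whenever new files are published would flag anything that is about to bypass the cache.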

And just like that, Cloudflare stopped working its magic and Azure started the cash registers. A quick tweak to the Cloudflare rules fixed the issue. As Troy put it, “I removed the direct download links from the HIBP website and just left the torrents which had plenty of seeds so it was still easy to get the data. Since then, Cloudflare upped that 15GB limit and I've restored the links for folks that aren't in a position to pull down a torrent.”

"Crisis over." (Bar that A$11,000 Azure bill...)

But he’s not cloud-bitter: “I always knew bandwidth on Azure was expensive and I should have been monitoring it better, particularly on the storage account serving the most data... before the traffic went nuts, egress bandwidth never exceeded 50GB in a day during normal usage which is AU$0.70 worth of outbound data.”

He has now set up an alert on the storage account for when that threshold is exceeded and a cost alert on Azure.
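In practice an alert like this would be configured in the cloud provider's monitoring tools rather than written by hand, but the underlying check is easy to show. The sketch below assumes daily egress totals are already available as a mapping of date to gigabytes; the function name and the sample figures are hypothetical, and only the 50GB daily ceiling comes from Troy's description.

```python
def days_over_threshold(daily_egress_gb, threshold_gb=50.0):
    """Return the days on which egress exceeded the alert threshold."""
    return [day for day, gb in daily_egress_gb.items() if gb > threshold_gb]


# Hypothetical sample data, invented for illustration.
sample = {
    "2021-12-01": 31.5,
    "2021-12-02": 48.0,
    "2021-12-03": 412.7,
}

alerts = days_over_threshold(sample)
print(alerts)
```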

Critics will argue that he should have done this sooner, but Cloudflare's performance arguably lulled him into a false sense of security. “I decided to alert both when the forecasted cost hits the budget, or when the actual cost gets halfway to the budget,” he noted, adding “cost is something that's easy to gradually creep up without you really noticing, for example, I knew even before this incident that I was paying way too much for log ingestion due to App Insights storing way too much data for services that are hit frequently, namely the HaveIBeenPwned API.

"I already needed to do better at monitoring this and I should have set up cost alerts - and acted on them - way earlier. I guess I'm looking at this a bit like the last time I lost data due to a hard disk failure. I always knew there was a risk but until it actually happened, I didn't take the necessary steps to protect against that risk doing actual damage. But hey, it could have been so much worse; that number could have been 10x higher and I wouldn't have known any earlier.” (It may be time to double-check all your cloud settings. It can happen to the best of us.)
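The cost alert rule Troy describes, firing when the forecasted cost reaches the budget or when the actual cost gets halfway there, boils down to a two-condition check. The following is a minimal sketch of that logic only; the function name and the example figures are hypothetical, and a real setup would rely on the provider's budgeting feature rather than custom code.

```python
def should_alert(actual_cost, forecast_cost, budget):
    """Alert if the forecast reaches the budget or actual spend reaches half of it."""
    return forecast_cost >= budget or actual_cost >= 0.5 * budget


# Hypothetical figures, invented for illustration.
print(should_alert(actual_cost=20, forecast_cost=80, budget=100))
print(should_alert(actual_cost=20, forecast_cost=120, budget=100))
print(should_alert(actual_cost=55, forecast_cost=90, budget=100))
```

Alerting on the forecast catches a runaway trend early in the billing period, while the halfway mark on actual spend acts as a backstop if the forecast lags.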

Have you suffered cloud bill shock at an enterprise or individual level? What are your top large-scale cloud cost management tips? We are all ears. Pop us an email, on or off the record.