NVIDIA says its new HGX H200 platform, which will be available from server companies and cloud service providers in Q2 2024, “nearly doubles” inference speeds on the open source Llama 2 language model, bringing enterprise and cloud customers their own flavour of the insanely quick hardware that powers the likes of OpenAI.

The release is an updated version of the stand-alone H100 accelerator, which the company will be calling the H200. It is equipped with HBM3e memory, allowing it to massively boost memory bandwidth and capacity.

The new HGX H200 platform is based on NVIDIA's Hopper architecture and combines the H200 Tensor Core GPU with HBM3e memory (understood to be from Micron). It delivers 141GB of memory at 4.8 terabytes per second, nearly double the capacity and 2.4x more bandwidth compared with its predecessor, the NVIDIA A100.

Whilst the company has previously built OpenAI the custom H100 NVL GPU with powerful HBM3 memory, the HGX H200 release is “the first GPU to offer HBM3e”, it said in a press release on November 13.

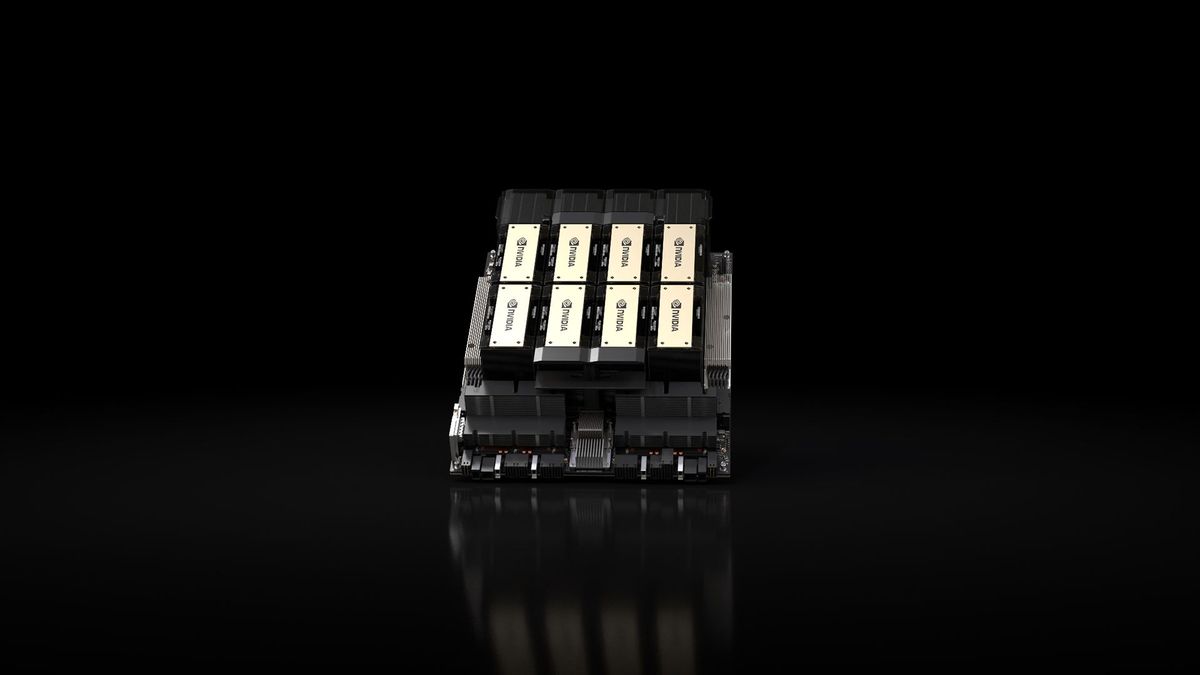

NVIDIA HGX H200: Four- and eight-way configurations shipping in Q2

The new platform will be available in NVIDIA HGX H200 server boards with four- and eight-way configurations, which are compatible with both the hardware and software of HGX H100 systems, NVIDIA said. It will also be available in the NVIDIA GH200 superchip with HBM3e, announced in August.

“With these options, H200 can be deployed in every type of data center, including on premises, cloud, hybrid-cloud and edge,” the company said.

ASRock Rack, ASUS, Dell Technologies, Eviden, GIGABYTE, Hewlett Packard Enterprise, Ingrasys, Lenovo, QCT, Supermicro, Wistron and Wiwynn “can update their existing systems with an H200”, it said.

“Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure will be among the first cloud service providers to deploy H200-based instances starting next year, in addition to CoreWeave, Lambda and Vultr,” the company added in a November 13 press release.

An eight-way HGX H200 provides over 32 petaflops of FP8 deep learning compute and 1.1TB of aggregate high-bandwidth memory for the highest performance in generative AI and HPC applications.
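The aggregate memory figure follows directly from the per-GPU numbers quoted above. As a rough sanity check, here is a minimal Python sketch; the function name `aggregate` and the use of decimal terabytes (1 TB = 1,000 GB) are assumptions made for illustration, not anything NVIDIA has published.

```python
# Per-GPU figures as quoted in the article for a single H200.
H200_MEMORY_GB = 141
H200_BANDWIDTH_TBPS = 4.8


def aggregate(gpus: int) -> dict:
    """Sum per-GPU memory and bandwidth across an HGX board with `gpus` GPUs.

    Hypothetical helper: assumes decimal units (1 TB = 1000 GB) and that
    memory and bandwidth simply add up across GPUs.
    """
    return {
        "memory_tb": gpus * H200_MEMORY_GB / 1000,
        "bandwidth_tbps": gpus * H200_BANDWIDTH_TBPS,
    }


if __name__ == "__main__":
    for n in (4, 8):
        totals = aggregate(n)
        print(
            f"{n}-way: {totals['memory_tb']:.2f} TB of HBM3e, "
            f"{totals['bandwidth_tbps']:.1f} TB/s aggregate bandwidth"
        )
```

On those assumptions, eight GPUs at 141GB each come to roughly 1.13TB, which lines up with the 1.1TB quoted for the eight-way board.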

(HBM3e is a standard for High Bandwidth Memory. Micron, Samsung, and SK Hynix have all pledged to start shipping it in 2024. As The Next Platform’s Timothy Prickett Morgan noted in 2022: “With HBM memory… you can stack up DRAM and link it to a very wide bus that is very close to a compute engine and drive the bandwidth up by many factors to even up to an order of magnitude higher than what is seen on DRAM attached directly to CPUs… [although it is] inherently more expensive…”)

Micron's 24GB HBM3e modules are based on eight stacked 24Gbit memory dies made using the company's 1β (1-beta) fabrication process, AnandTech recently noted, adding: “These modules can hit data rates as high as 9.2 GT/second, enabling a peak bandwidth of 1.2 TB/s per stack, which is a 44% increase over the fastest HBM3 modules available…”
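Those per-stack numbers can be reproduced with some simple arithmetic. The sketch below is illustrative only and rests on two assumptions that are not stated in the article: that each HBM stack uses a 1,024-bit interface, and that the "fastest HBM3" baseline runs at 6.4 GT/s. The function names are hypothetical.

```python
HBM_BUS_WIDTH_BITS = 1024       # assumption: interface width per HBM stack
HBM3_BASELINE_RATE_GTPS = 6.4   # assumption: fastest HBM3 data rate used for comparison


def stack_capacity_gb(dies: int, die_gbit: int) -> float:
    """Capacity of one stack in GB: dies x Gbit per die, 8 bits per byte."""
    return dies * die_gbit / 8


def stack_bandwidth_tbps(rate_gtps: float, bus_bits: int = HBM_BUS_WIDTH_BITS) -> float:
    """Peak bandwidth of one stack in TB/s: transfers/s x bus width in bytes."""
    return rate_gtps * bus_bits / 8 / 1000


if __name__ == "__main__":
    capacity = stack_capacity_gb(dies=8, die_gbit=24)
    hbm3e = stack_bandwidth_tbps(9.2)
    hbm3 = stack_bandwidth_tbps(HBM3_BASELINE_RATE_GTPS)
    uplift = (hbm3e / hbm3 - 1) * 100
    print(f"Capacity per stack: {capacity:.0f} GB")
    print(f"HBM3e peak bandwidth per stack: {hbm3e:.2f} TB/s")
    print(f"Uplift over assumed HBM3 baseline: {uplift:.0f}%")
```

Under those assumptions, eight 24Gbit dies give 24GB per stack, 9.2 GT/s works out to about 1.18 TB/s (the quoted 1.2 TB/s after rounding), and the uplift over a 6.4 GT/s baseline is just under 44%.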

Micron said on its last earnings call that its “planned fiscal 2024 CapEx investments in HBM capacity have substantially increased versus our prior plan in response to strong customer demand for our industry-leading product”; CEO Sanjay Mehrotra told investors that Micron should capture “several hundred million dollars in our fiscal year '24” from HBM3e.