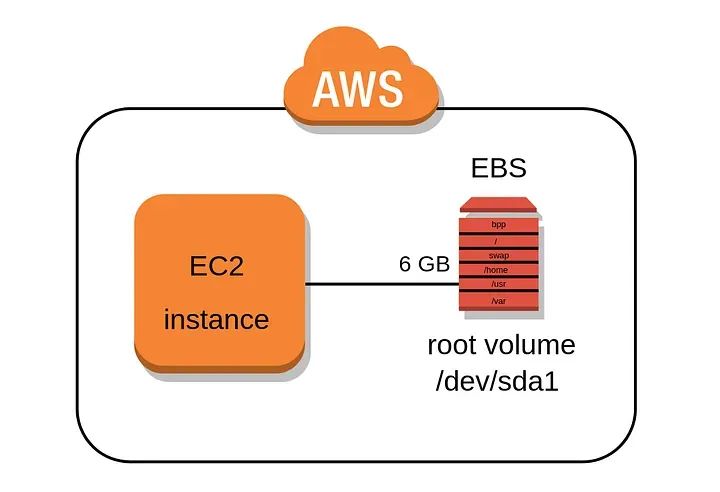

Developers frequently need to run applications on EC2 instances, a common approach with legacy or functionally complex apps in particular. The instances use Amazon EBS (Elastic Block Store) volumes as their persistent storage. Since your applications will fail if your disks become full, some overprovisioning of EBS space is necessary.

It often happens that an EBS volume needs to be adjusted. For example, I once had to add a 4 TB volume per production instance to collect advanced logs about alleged bugs, writes Uri Naiman, Sales Engineering Team Lead at Zesty. But after I finished debugging, I no longer needed the volumes.

Running out of disk space is a serious risk that must be mitigated in order to prevent downtime and data loss. Determining the optimal size of the EBS volume can be extremely difficult. In some cases, I’ve needed to expand an EBS volume due to new application features requiring more disk space for debugging or for dealing with higher traffic, which can create more logs and temporary data than was initially anticipated.

Another common scenario is your application or database storage requiring more space over time. The safe bet is to provision far more than you’re actually going to use, to cover all possible causes of data peaks. However, this will increase your cloud bill substantially.

When a software product is being developed, the focus is on delivery, while the exact requirements and costs are still unknown. In almost every company I’ve ever worked at, once the product was operational, we discovered large expenses in our cloud bill. This was often due to our EBS volume cost.

In addition to the hefty cloud bill, developers are often forced to dedicate valuable hours of the day to managing volumes manually. But this manual and repetitive task is a perfect opportunity for AI and machine learning to take over.

In this article, I’ll demonstrate how to shrink an EBS volume in order to lower your cloud bill. First, I’ll explain how to do this manually, a far more labor-intensive task than you might expect. Then, I’ll explain how this can be orchestrated automatically without downtime.

EBS Pricing Considerations

The cost of a single terabyte general-purpose disk (gp3) runs at least $80 per month. That means a dozen instances with such storage would set you back roughly $1,000 per month. That’s not even taking into account any backups you’d need to make (priced at roughly 50% of gp3 storage cost). Overprovisioning at this scale is hardly pragmatic.
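To make the arithmetic concrete, here is a minimal sketch. It assumes a gp3 list price of 8 cents per GB-month (a us-east-1 figure that varies by region) and ignores any extra charges for provisioned IOPS or throughput. The function name is hypothetical.

```shell
# Estimate the monthly EBS storage cost in USD.
# Arguments: size per volume in GB, number of volumes,
# price in cents per GB-month (assumed default: 8).
ebs_monthly_cost() {
  total_cents=$(( $1 * $2 * ${3:-8} ))
  printf '%d.%02d\n' $(( total_cents / 100 )) $(( total_cents % 100 ))
}

ebs_monthly_cost 1000 1    # one 1 TB volume
ebs_monthly_cost 1000 12   # a dozen such volumes
```

Under these assumptions, the two calls print 80.00 and 960.00, which is where the "roughly $1,000" figure comes from.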

In order to save costs and be able to adjust continuously based on demand, you need to provide sufficient EBS storage without reserving too much. While in theory you could handle EBS sizes for just a small number of instances manually, the process is still tedious, requiring multiple steps. And once your system grows beyond a few instances, this simply becomes unmanageable without automation.

Expanding a Volume

Expanding an EBS volume in AWS involves submitting a modification request, where you can set the new size and tweak the performance parameters. But this approach has its limitations. For one, it requires a cooldown period of several hours between consecutive modifications of the same volume. While a reboot isn’t usually necessary, there have been times when I’ve needed to restart the instance to apply the changes or for the performance adjustments to take effect. There are also a few cases where changes to a desired volume configuration may not even be possible.
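As a rough sketch of what such a request looks like from the command line: the function names and the example volume ID below are hypothetical, and the second step assumes an ext filesystem created directly on the device, without a partition table.

```shell
# Step 1 (from any machine with AWS CLI access): request the larger size,
# then print the state and progress of the modification.
request_expansion() {
  volume_id=$1
  new_size_gib=$2
  aws ec2 modify-volume --volume-id "$volume_id" --size "$new_size_gib" || return 1
  aws ec2 describe-volumes-modifications --volume-ids "$volume_id" \
    --query 'VolumesModifications[0].[ModificationState,Progress]' --output text
}

# Step 2 (on the instance): grow an ext2/3/4 filesystem so that it fills
# the enlarged volume.
# Assumptions: no partition table (otherwise run growpart first) and an
# ext filesystem (XFS would need xfs_growfs instead).
grow_ext_filesystem() {
  device=$1
  sudo resize2fs "$device"
}

# Example (hypothetical volume ID):
#   request_expansion vol-0123456789abcdef0 20
#   grow_ext_filesystem /dev/xvdb
```

The extra capacity is only usable after the second step. The modification request alone changes the block device, not the filesystem on it.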

While the expansion of the volume can be automated, the customer must implement the required automation themselves, which can incur additional development and maintenance costs.

Shrinking a Volume

When peaks in demand subside, shrinking EBS volumes to fit the reduced app demand would enable greater cost efficiency. Unfortunately, the size of an existing EBS volume cannot be decreased. Instead, it is possible to create a smaller volume and move the data using tools such as rsync, at the cost of pausing the system’s write operations during the migration.

Let’s take a look at how it’s done. We’ll consider an instance with two EBS volumes: one (root) for the system and another mounted to /data, for the application data:

$ df -h

Filesystem Size Used Avail Use% Mounted on

/dev/xvda1 16G 1.6G 15G 10% /

…

/dev/xvdb 7.8G 92K 7.4G 1% /data

The respective EBS volumes can be viewed using the AWS CLI command aws ec2 describe-volumes, which prints out instance and volume IDs. Suppose we determined that /data was oversized and wanted to replace it with a smaller volume.
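How small the replacement can be depends on how much data /data actually holds. As a rough sketch, assuming you want a fixed percentage of headroom on top of current usage: the 50% default and the function name are illustrative assumptions, and filesystem overhead is not accounted for.

```shell
# Suggest a volume size in GiB from used space (in KiB) plus headroom
# (in percent). Rounds up to a whole GiB and never returns less than 1.
suggest_size_gib() {
  used_kib=$1
  headroom_pct=${2:-50}
  needed_kib=$(( used_kib * (100 + headroom_pct) / 100 ))
  size_gib=$(( (needed_kib + 1048575) / 1048576 ))
  if [ "$size_gib" -lt 1 ]; then
    size_gib=1
  fi
  echo "$size_gib"
}

# On the instance, current usage of /data in KiB could be read with GNU df:
#   used_kib=$(df --output=used -k /data | tail -n 1)
#   suggest_size_gib "$used_kib" 50
```

A result like this is only a starting point. Expected growth and peak usage should inform the final figure.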

Using AWS CLI, we can create a new small volume of 5 GB:

aws ec2 create-volume --availability-zone eu-west-1a --size 5 --volume-type gp3

The volume should be attached to the instance, after which it becomes visible in the system as /dev/xvdc:

aws ec2 attach-volume --volume-id "<small-volume-id>" --instance-id "<instance-id>" --device "/dev/xvdc"
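The two commands above can be chained so that the new volume ID is captured rather than copied by hand. This is a minimal sketch with a hypothetical function name and placeholder IDs. Note that the volume has to be created in the same Availability Zone as the instance, and that attaching only succeeds once the volume has reached the available state.

```shell
# Create a gp3 volume, wait until it is available, attach it to the
# instance, and print the new volume ID.
create_and_attach() {
  instance_id=$1
  size_gib=$2
  az=$3        # must match the instance's Availability Zone
  device=$4
  volume_id=$(aws ec2 create-volume --availability-zone "$az" \
    --size "$size_gib" --volume-type gp3 \
    --query VolumeId --output text) || return 1
  aws ec2 wait volume-available --volume-ids "$volume_id" || return 1
  aws ec2 attach-volume --volume-id "$volume_id" \
    --instance-id "$instance_id" --device "$device" >/dev/null || return 1
  echo "$volume_id"
}

# Example (placeholder instance ID):
#   create_and_attach "<instance-id>" 5 eu-west-1a /dev/xvdc
```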

Next, we need to log in to the instance and perform the following actions (the commands shown here work on Amazon Linux):

- Create a filesystem on the new block device, for instance, of type ext3 (a new volume must be formatted before it can be mounted):

sudo mkfs -t ext3 /dev/xvdc

- Create the directory /newdata and mount the block device that corresponds to <small-volume-id>:

sudo mkdir -p /newdata

sudo mount /dev/xvdc /newdata

- Update /etc/fstab so that the new device is remounted at /data on reboot (note that depending on your particular setup and filesystem of choice, this line might look different):

echo "UUID=$(lsblk -no UUID /dev/xvdc) /data ext3 defaults 0 0" | sudo tee -a /etc/fstab

- Now that both /data and /newdata are mounted, we can migrate the content using rsync.

sudo rsync -aHAXxSP /data/ /newdata/

With these flags, rsync preserves permissions, ownership, timestamps, hard links, ACLs, and extended attributes, handles sparse files efficiently, stays within a single filesystem, and shows progress. The trailing slash on /data/ tells rsync to copy the contents of the directory rather than creating a nested /newdata/data directory.

To keep the copy consistent, no writes may reach /data while the migration runs. To achieve that, we’ll most likely have to shut down our application for the duration of the migration, leading to system downtime.

- Next, unmount /data and erase its line in /etc/fstab. We also need to remount the /newdata filesystem as /data, adjust the /etc/fstab accordingly, reboot the instance to verify the correct mount point, and conduct any necessary checks.

$ df -h

Filesystem Size Used Avail Use% Mounted on

/dev/xvda1 16G 1.6G 15G 10% /

…

/dev/xvdc 4.9G 140K 4.6G 1% /data
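Before the old /data is unmounted in the step above, while both filesystems are still mounted, it may be worth checking that the copy is complete. Here is a minimal sketch, assuming diff is available on the instance. verify_copy is a hypothetical helper that compares file contents only (not ownership, permissions, or ACLs), and it should run as root so that every directory is readable.

```shell
# Compare two directory trees by content.
# Prints "match" and returns 0 if they are identical,
# prints "differ" and returns 1 otherwise.
verify_copy() {
  src=$1
  dst=$2
  if diff -rq "$src" "$dst" >/dev/null 2>&1; then
    echo "match"
  else
    echo "differ"
    return 1
  fi
}

# Example, run while the application is still stopped:
#   verify_copy /data /newdata
```

If the check reports a difference, repeating the rsync step before swapping the mount points is the safer path.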

Migration is now complete, and we can destroy the decommissioned larger EBS volume:

aws ec2 detach-volume --volume-id <larger-volume-id>

aws ec2 delete-volume --volume-id <larger-volume-id>
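Detaching is asynchronous, so a delete issued immediately afterwards can fail while the volume is still in use. A small sketch that waits in between (the function name is hypothetical, and the volume should already be unmounted inside the instance):

```shell
# Detach a volume, wait until AWS reports it as available, then delete it.
# Stops at the first step that fails.
retire_volume() {
  volume_id=$1
  aws ec2 detach-volume --volume-id "$volume_id" &&
    aws ec2 wait volume-available --volume-ids "$volume_id" &&
    aws ec2 delete-volume --volume-id "$volume_id"
}

# Example (placeholder volume ID):
#   retire_volume "<larger-volume-id>"
```

Deletion is irreversible, so it makes sense to run this only after the application has been verified against the new volume.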

As I’ve demonstrated, shrinking poses many challenges. You need to deal with the AWS infrastructure and reliably migrate the data between volumes. Most likely, the application must be stopped or switched to a read-only mode to avoid an inconsistent migration, which often leads to downtime. If there are any human or coding errors, there is a risk that data may be corrupted.

Automatically Scale EBS Volumes to Demand with Machine Learning

Machine learning based technology that automatically resizes EBS volumes in response to demand, shrinking them as well as expanding them, can greatly reduce overspending. This minimizes costs and eliminates the need to continuously monitor or tweak the capacity as demand fluctuates.

Automated solutions for managing disk volumes work 24/7, so they can respond instantly to unexpected changes in demand. They save significantly more than manual management, and they don’t require any downtime when decreasing storage size. Plus, they provide the intangible value of relieving teams of the stress of having insufficient capacity should a data peak suddenly occur.

Intelligent automated solutions not only free up valuable time for cloud operations teams, they also reduce the risk of human error by making data-driven decisions based on disk utilization metrics, which makes cost optimization more precise.

The FinOps industry has certainly embraced automated solutions to save time and money on compute, but storage seems to be largely ignored. Companies can of course continue to have their DevOps teams shrink EBS volumes manually. But why would you prefer that over automated management that consistently matches provisioned storage to actual consumption, ensuring both sufficient capacity and excellent storage cost savings?