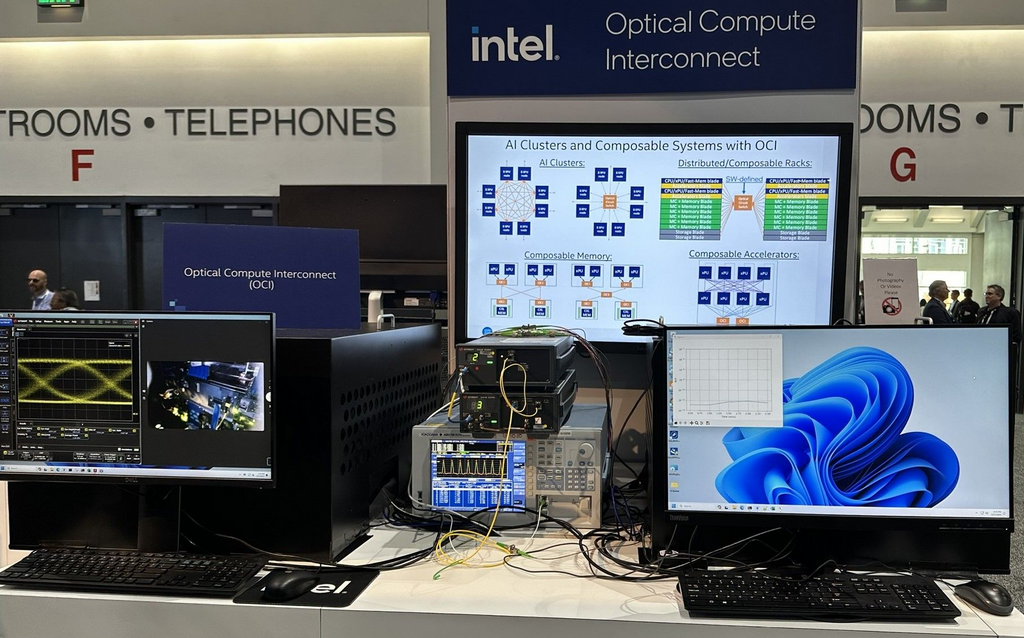

Intel has demonstrated a new optical compute interconnect (OCI) chiplet co-packaged with an Intel CPU running live data – at I/O performance levels it considers a “revolutionary milestone” for data centre workloads.

Whilst this is just a prototype, Intel said it is “working with select customers to co-package OCI with their SOCs as an optical I/O solution” – and the OCI chiplet, packaged here with an Intel CPU, could also be “integrated with next-generation CPUs, GPUs, IPUs and other SoCs.”

The package could give organisations running AI workloads an alternative to electrical I/O (i.e. copper trace connectivity), which supports high bandwidth density and low power but offers only short reaches of “about one meter or less”, Intel explained.

The demo showed 4 terabits per second (Tbps) bi-directional data transfer, compatible with PCIe Gen5.


It also consumed only 5 picojoules (pJ) per bit, compared with about 15 pJ/bit for pluggable optical transceiver modules, Intel said, adding: “This level of hyper-efficiency is critical for data centers and… could help address AI’s unsustainable power requirements.”
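To put the per-bit figures in context, link power is energy per bit multiplied by throughput. The sketch below is a back-of-envelope calculation rather than an Intel figure. It assumes the link runs continuously at the full 4 Tbps shown in the demo, and the helper name `link_power_watts` is hypothetical.

```python
# Back-of-envelope only: power (W) = energy per bit (J/bit) x bit rate (bit/s).
# Assumes the link runs flat out at the 4 Tbps demo figure; real utilisation
# and overheads will differ.

TBPS = 1e12  # bits per second in one terabit per second
PJ = 1e-12   # joules in one picojoule


def link_power_watts(energy_pj_per_bit: float, rate_tbps: float) -> float:
    """Return power draw in watts for a given energy per bit and throughput."""
    return energy_pj_per_bit * PJ * rate_tbps * TBPS


oci = link_power_watts(5, 4)         # OCI chiplet at 5 pJ/bit
pluggable = link_power_watts(15, 4)  # pluggable optics at about 15 pJ/bit
print(f"OCI chiplet: {oci:.0f} W, pluggable optics: {pluggable:.0f} W")
```

On those assumptions the chiplet would draw roughly 20 W for the same traffic that would cost pluggable modules around 60 W, which is the threefold gap behind Intel's efficiency claim.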

Intel demoed the OCI chiplet at the Optical Fiber Communication Conference (OFC) 2024 today.

Thomas Liljeberg, a senior director in Intel’s Integrated Photonics Solutions (IPS) Group, said: “The ever-increasing movement of data from server to server is straining the capabilities of today’s data center infrastructure, and current solutions are rapidly approaching the practical limits of electrical I/O performance.”

He added that the OCI chiplet lets customers “seamlessly integrate co-packaged silicon photonics interconnect solutions into next-generation compute systems [and] promises to revolutionize high-performance AI infrastructure.”

A lot of work is going into networking requirements for AI-intensive data centre workloads, with Nvidia last year touting a 51 Tbps Ethernet switch as part of an integrated platform tuned for GPT and BERT LLMs, distributed training and parallel processing, natural language processing, computer vision, high-performance simulations and data analytics.

Intel’s first OCI chiplet is designed to support 64 channels of 32 gigabits per second (Gbps) data transmission in each direction over up to 100 meters of optical fiber, Intel said, and could enable “future scalability of CPU/GPU cluster connectivity and novel compute architectures, including coherent memory expansion and resource disaggregation.”
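The channel figures line up with the 4 Tbps headline from the demo: 64 channels at 32 Gbps gives about 2 Tbps in each direction, or roughly 4 Tbps counting both. A quick check, assuming decimal units (1 Tbps = 1,000 Gbps) and ignoring any encoding or protocol overhead, since Intel's numbers may be raw line rates:

```python
# Aggregate bandwidth from the per-channel figures Intel quotes.
CHANNELS = 64          # optical channels in each direction
GBPS_PER_CHANNEL = 32  # data rate per channel, Gbps

per_direction_gbps = CHANNELS * GBPS_PER_CHANNEL     # 2048 Gbps each way
bidirectional_tbps = 2 * per_direction_gbps / 1000   # both directions, in Tbps
print(per_direction_gbps, bidirectional_tbps)
```

The result, about 4.1 Tbps, is consistent with the “4 Tbps bi-directional” figure once rounded.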

Earlier this month, the Semiconductor Industry Association (SIA) reported global semiconductor industry sales of $46.4 billion for April 2024, an increase of 15.8% compared with the April 2023 total of $40.1 billion.

It predicts that annual global sales will hit a record $611.2 billion in 2024.