"Building production-grade RAG remains a complex and subtle problem... unlike traditional software, every decision in the data stack directly affects the accuracy of the full LLM-powered system."

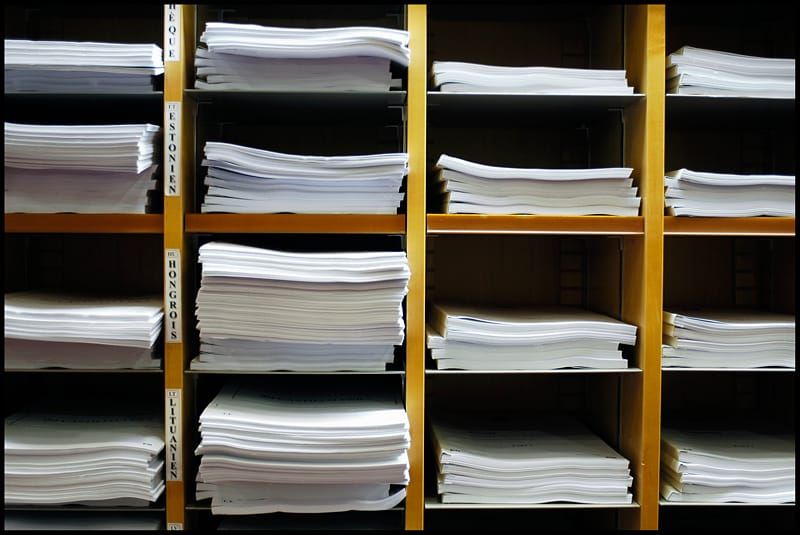

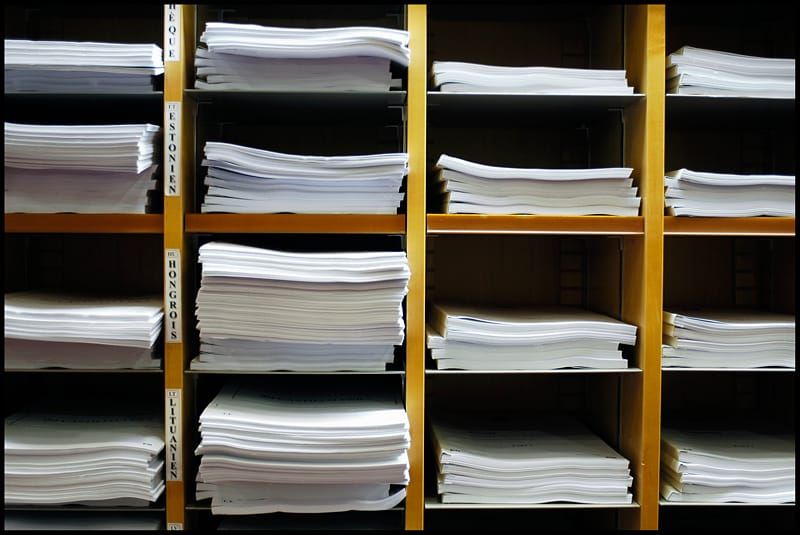

LlamaIndex – a startup that has rapidly found itself at the heart of the burgeoning generative AI toolchain – has launched a brace of new services including one, LlamaParse, that helps users build Retrieval Augmented Generation (RAG) workflows “over complex documents that can answer questions over both tabular and unstructured data.”

More simply, it helps users pull unstructured data from PDFs and feed it into Large Language Models, using what the company described this week as “a proprietary parsing service that is incredibly good at parsing PDFs with complex tables into a well-structured markdown format.”

(Whilst The Stack does not regularly cover product launches from vendors, LlamaIndex’s rapid work to mature an exciting but complex and challenging generative AI toolchain deserves attention.)
