
"The team followed what I consider to be best practice..."

The Prime Video team published a post titled "Scaling up the audio/video monitoring service and reducing costs by 90%", and the internet piled in with opinions and bad takes, most of which missed the point.

What the team did follows the advice I’ve been giving for years (here’s a video from 2019):

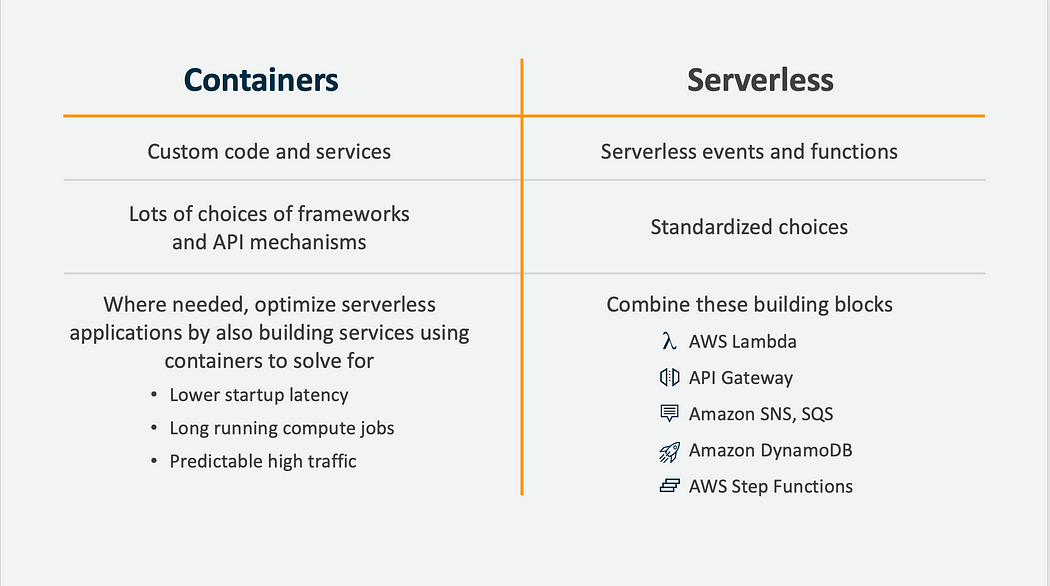

“Where needed, optimize serverless applications by also building services using containers to solve for lower startup latency, long running compute jobs, and predictable high traffic”

The Prime Video team had followed a path I call Serverless First, where the first try at building something is put together with Step Functions and Lambda calls, writes Adrian Cockcroft.

They state in the blog that this was quick to build, which is the point. When you are exploring how to construct something, building a prototype in a few days or weeks is a good approach.

Then they tried to scale it to cope with high traffic and discovered that some of the state transitions in their Step Functions were too frequent, and that they had some overly chatty calls between AWS Lambda functions and S3.

They were able to reuse most of their working code by combining it into a single long-running microservice that is horizontally scaled using ECS and invoked via a Lambda function.
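To make the trade-off concrete, here is a minimal Python sketch of the general pattern, not the Prime Video team's code. It runs the same stage functions two ways: once as separately orchestrated steps that hand data over through an object store, and once as plain calls inside a single process. `FakeObjectStore`, `run_orchestrated`, and `run_in_process` are hypothetical names invented for illustration; the store is an in-memory stand-in for S3, and the transition counter stands in for orchestrator state transitions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# A stage is any function that transforms a chunk of bytes (hypothetical type).
Stage = Callable[[bytes], bytes]


@dataclass
class FakeObjectStore:
    """In-memory stand-in for an object store; counts every call made to it."""
    objects: Dict[str, bytes] = field(default_factory=dict)
    calls: int = 0

    def put(self, key: str, data: bytes) -> None:
        self.calls += 1
        self.objects[key] = data

    def get(self, key: str) -> bytes:
        self.calls += 1
        return self.objects[key]


def run_orchestrated(
    stages: List[Stage], frame: bytes, store: FakeObjectStore
) -> Tuple[bytes, int]:
    """Run each stage as a separate step, passing data through the store."""
    transitions = 0
    store.put("frame/0", frame)
    for i, stage in enumerate(stages):
        transitions += 1                       # one orchestrator transition per stage
        data = store.get(f"frame/{i}")         # read the previous stage's output
        store.put(f"frame/{i + 1}", stage(data))  # write this stage's output
    return store.get(f"frame/{len(stages)}"), transitions


def run_in_process(stages: List[Stage], frame: bytes) -> bytes:
    """Run the same stages as direct calls; data stays in memory."""
    for stage in stages:
        frame = stage(frame)
    return frame


if __name__ == "__main__":
    stages: List[Stage] = [bytes.upper, lambda b: b[::-1], lambda b: b + b"!"]
    store = FakeObjectStore()
    result, transitions = run_orchestrated(stages, b"abc", store)
    print(result, transitions, store.calls)
    print(run_in_process(stages, b"abc"))
```

Both paths reuse the same stage functions and produce the same result. The difference is that the orchestrated path pays for a transition and two store calls per stage, which adds up when the work is repeated at high frequency, while the in-process path pays for neither.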
