In September 2020 VMware announced Project Monterey, which it called a “material rearchitecture” of infrastructure software for VMware Cloud Foundation (VCF), its suite of products for managing virtual machines and orchestrating containers. The project drew in a host of hardware partners as the virtualisation heavyweight looked to respond to the way cloud companies were increasingly using programmable hardware like FPGAs and SmartNICs to run data centre workloads offloaded from the CPU. Doing so frees up CPU cycles for core enterprise application processing and improves performance for the networking, security, storage and virtualisation workloads moved onto these hardware accelerators.

Companies, including numerous large VMware customers looking to keep workloads on-premises, had struggled to keep pace with the way cloud hyperscalers were rethinking data centre architecture. Adopting emerging SmartNICs, increasingly called Data Processing Units (DPUs), required extensive software development to adapt them to the user’s infrastructure. Project Monterey was an ambitious bid to tackle that problem from VMware’s side, in close collaboration with SmartNIC/DPU vendors Intel, NVIDIA and Pensando (now owned by AMD) and server OEMs Dell, HPE and Lenovo, with the aim of improving performance.

This week Project Monterey bore some very public fruit, with those server OEMs and chipmakers lining up to offer new products optimised for VMware workloads and making some bold claims about total cost of ownership (TCO) savings and infrastructure price-performance. Relieving server CPUs of networking services and running infrastructure services on the DPU, isolated from the workload domain, will also markedly improve security, all partners emphasised, as well as workload latency and throughput for enterprises.

Project Monterey: Harder, Better, Faster, Stronger?

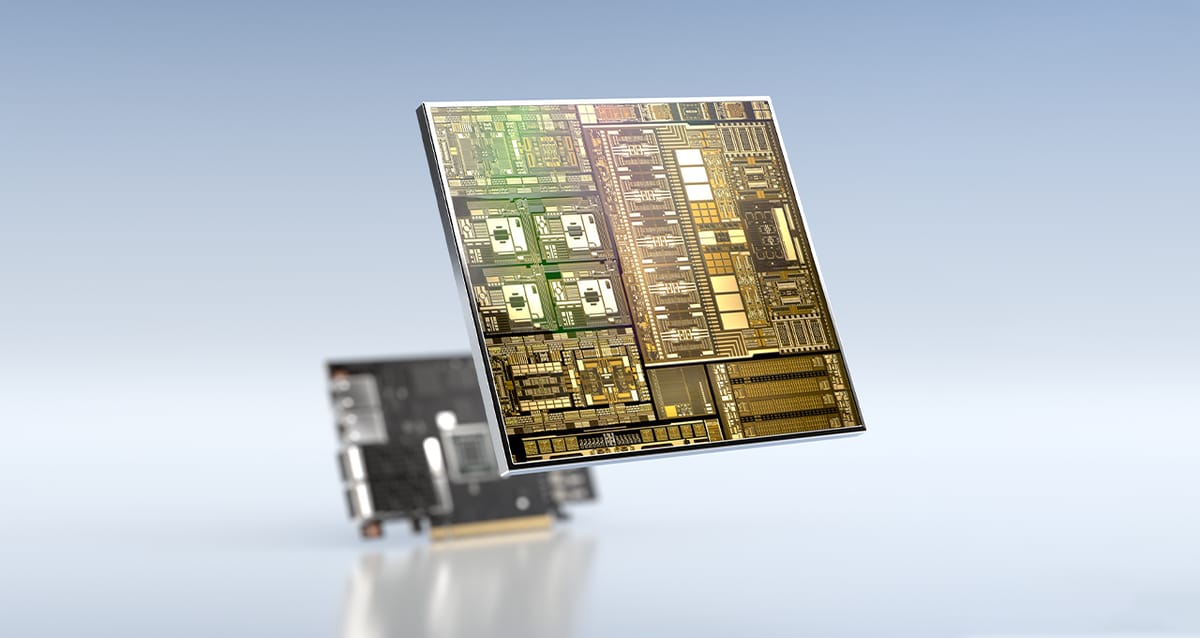

NVIDIA, for example, claims its new DPUs can save $8,200 per server in TCO terms, or $1.8 million in efficiency savings over three years for 1,000 server installations. (A single BlueField-3 DPU replaces approximately 300 CPU cores, NVIDIA CEO Jensen Huang has previously claimed.) Customers can get the DPUs with new Dell PowerEdge servers that are optimised for vSphere 8 and start shipping later this year. “This is a huge moment for enterprise computing and the most significant vSphere release we’ve ever seen,” said Kevin Deierling, a senior VP at NVIDIA. “Historically, we’ve seen lots of great new features and capabilities with VMware’s roadmap. But for the first time, we’re introducing all of that goodness running on a new accelerated computing platform that runs the infrastructure of the data centre.”

(The BlueField-3 DPUs accelerating these VMware workloads feature 16 Arm A78 cores, 400Gbit/s bandwidth and PCIe Gen 5 support, along with accelerators for software-defined storage, networking, security, streaming, line-rate TLS/IPsec cryptography, and precision timing for 5G telco and time-synchronised data centres.)

"Certain latency and bandwidth-sensitive workloads that previously used virtualization 'pass-thru' can now run fully virtualized with pass-thru-like performance in this new architecture, without losing key vSphere capabilities like vMotion and DRS" said VMware CEO Raghu Raghuram. Infrastructure admins can rely on vSphere to also manage the DPU lifecycle, Raghuram added: “The beauty of what the vSphere engineers have done is they have not changed the

management model. It can fit seamlessly into the data center architecture of today..."

The releases come amid a broader shift to software-defined infrastructure. As NVIDIA CEO Jensen Huang has previously noted: "Storage, networking, security, virtualisation, and all of that... all of those things have become a lot larger and a lot more intensive and it’s consuming a lot of the data centre; probably half of the CPU cores inside the data center are not running applications. That’s kind of strange because you created the data center to run services and applications, which is the only thing that makes money… The other half of the computing is completely soaked up running the software-defined data centre, just to provide for those applications. [That] commingles the infrastructure, the security plane and the application plane and exposes the data centre to attackers. So you fundamentally want to change the architecture as a result of that; to offload that software-defined virtualisation and the infrastructure operating system, and the security services to accelerate it…”

Vendors, meanwhile, are lining up to offer new hardware and solutions born out of the VMware collaboration. Dell's initial offering of NVIDIA DPUs is on its VxRail solution and takes advantage of a new element of VMware's ESXi (vSphere Distributed Services Engine), moving network and security services to the DPU. These will be available via channel partners in the near future. Jeff Boudreau, President of Dell Technologies Infrastructure Solutions Group, added: “Dell Technologies and VMware have numerous joint engineering initiatives spanning core IT areas such as multicloud, edge and security to help our customers more easily manage and gain value from their data.”

AMD, meanwhile, is making its Pensando DPUs available through Dell, HPE and Lenovo. Those with budgets for hardware refreshes and a need for performance for distributed workloads will be keeping a close eye on price and performance data as the products continue to land in the coming weeks and months.