AI algorithms struggle to recognise events or objects in contexts that differ from those in their training set. (As philosopher Douglas Hofstadter put it in one interview: "If you look at situations in the world, they don’t come framed, like a chess game or a Go game or something like that. A situation in the world is something that has no boundaries at all, you don’t know what’s in the situation, what’s out of the situation.")

If you're trying to train an AI to deal with this "unframed" world, you run into a lot of challenges. Humans learn about causal relationships by acting in a given environment, observing the result, then refining the mental model they've "built" by taking similar actions in subtly different environments in the great, fluid thing that is The World. It's hard to build AI training sets that help algorithms "understand" the myriad causal relationships at play at any given time in a similar way. It is far easier to train them on more fixed patterns of behaviour: e.g. the hard numbers that need to be crunched to beat a human at a game of tightly circumscribed mathematical probabilities like chess.

Machine learning researchers are nonetheless increasingly focussed on developing models that involve causal reasoning -- algorithms which learn to understand "what affects what".

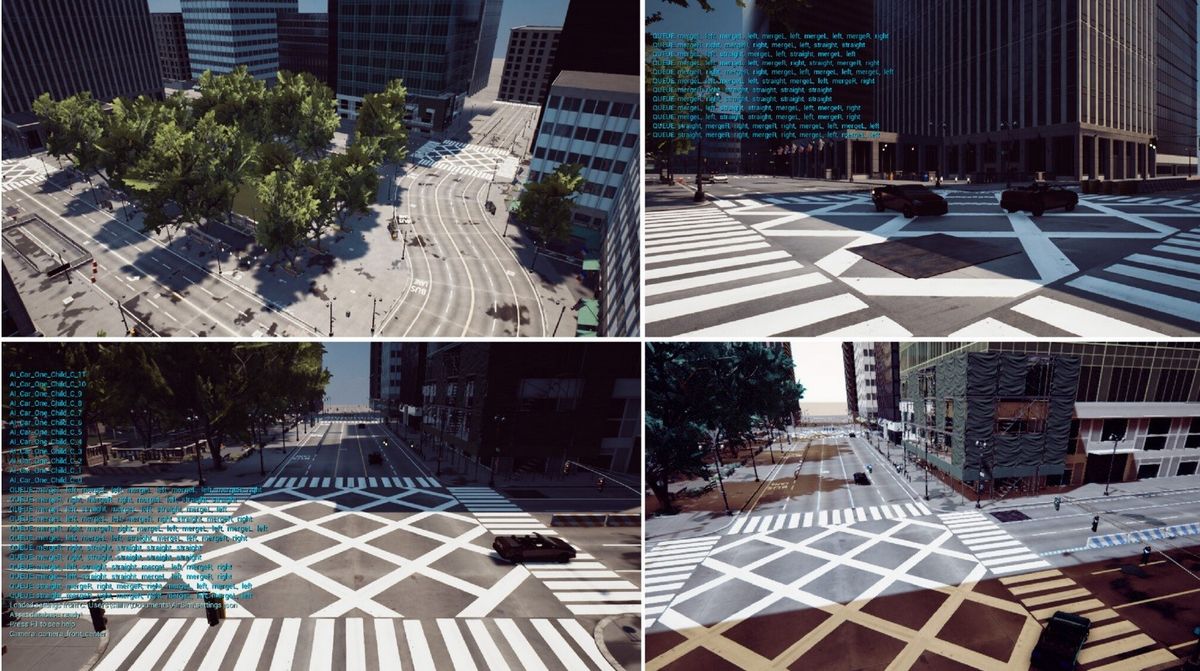

In a bid to help, a team of researchers at Microsoft have built and released a "high-fidelity simulation environment" they are dubbing CausalCity, "with AI agent controls to create scenarios for causal and counterfactual reasoning" to improve training. Their simulation -- focussed on algorithms for self-driving vehicles, but applicable beyond that domain -- lets users introduce "confounders" to the environment, e.g. tweaking the relationship between vehicle speed, stopping distance and the amount of water on the roads.
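To make the idea of a confounder concrete, here is a minimal toy sketch in plain Python. It does not use CausalCity or its API; every name, number and the linear model itself are assumptions made up for illustration. Road wetness influences both how fast drivers choose to go and how far a car needs to stop, so a naive fit on observed data can badly misjudge the effect of speed on stopping distance. Setting speed independently of the weather (an intervention) recovers the effect that was built into the toy model.

```python
import random

# Hypothetical toy model, NOT the CausalCity API. All constants are invented.
TRUE_EFFECT = 2.0   # assumed metres of stopping distance per m/s of speed
WET_PENALTY = 40.0  # assumed extra stopping distance on a wet road


def simulate(n, intervene_on_speed, seed=0):
    """Return (speed, stopping_distance) pairs from the toy model."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        wet = 1.0 if rng.random() < 0.5 else 0.0  # the confounder
        if intervene_on_speed:
            # Intervention: speed is set by the experimenter, ignoring weather.
            speed = rng.uniform(15.0, 35.0)
        else:
            # Observation: drivers slow down when the road is wet.
            speed = 30.0 - 10.0 * wet + rng.gauss(0.0, 2.0)
        stop = TRUE_EFFECT * speed + WET_PENALTY * wet + rng.gauss(0.0, 3.0)
        rows.append((speed, stop))
    return rows


def slope(rows):
    """Ordinary least-squares slope of stopping distance on speed."""
    n = len(rows)
    mean_x = sum(x for x, _ in rows) / n
    mean_y = sum(y for _, y in rows) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in rows)
    var = sum((x - mean_x) ** 2 for x, _ in rows)
    return cov / var


observed = slope(simulate(20000, intervene_on_speed=False))
intervened = slope(simulate(20000, intervene_on_speed=True))
print(f"slope from observed data:   {observed:.2f}")
print(f"slope under intervention:   {intervened:.2f}")
```

In this sketch the observed slope is pulled far away from the built-in effect because wet roads go with both lower speeds and longer stops. A simulator that exposes the confounder as a controllable setting lets researchers generate both kinds of data and check whether an algorithm can tell them apart.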

The aim: let researchers replay a scenario several times, e.g. for a self-driving vehicle, "with different interventions to create 'ground-truth' data for a 'What would have happened if...?' question".

Or, as they say of their work -- published as code on GitHub and as a paper -- CausalCity creates a simulation environment "designed to be applicable to studying causal reasoning generally; however, the specific environment we chose is that of driving."

The research involved generating synthetic datasets for detecting and predicting car accidents, as well as generating specific actions for predicting driver maneuvers, pedestrian intentions, front car intentions, traffic rule violations, and accident scenarios. Training was carried out on a single NVIDIA P100 GPU, with the team evaluating "three state-of-the-art causal inference algorithms" (NRI, NS-DR, and V-CDN). The results drive home how hard it remains for AI to do causal discovery, with even these state-of-the-art algorithms struggling "to some degree" to predict the trajectory and behaviour of the cars.

By sharing the CausalCity code and simulation, the Microsoft Research team say they hope that it can be used to refine the domain of AI "causal reasoning, discovery and counterfactual reasoning... For example, our simulation enables a 'hero' vehicle to be used to create targeted interventions in the scene and such a method could be used to test the ability for algorithms to reason counterfactually (i.e., what would have happened if the hero vehicle did not stop at the traffic signal?). As this is a simulation it is possible to generate a scene with the same initial starting conditions but to strategically intervene with a specific action at a specific moment."
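The "same initial starting conditions, one targeted intervention" idea can be sketched with a tiny deterministic toy in Python. This is not CausalCity code: the one-intersection world, the `InitialState` fields and the `rollout` function are all hypothetical, invented here only to show how replaying a scene twice yields a factual outcome and a ground-truth counterfactual.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InitialState:
    """Hypothetical starting conditions shared by every replay."""
    hero_x: float = -50.0      # hero drives along x towards the junction at 0
    hero_speed: float = 10.0   # metres per second
    cross_y: float = -50.0     # cross traffic drives along y towards 0
    cross_speed: float = 10.0
    red_until: float = 8.0     # hero's signal is red before this time (s)
    stop_line_x: float = -5.0


def rollout(init, hero_obeys_signal, dt=0.1, horizon=15.0, radius=2.0):
    """Replay the scene; return True if the two cars meet in the junction."""
    hero_x, cross_y = init.hero_x, init.cross_y
    for step in range(int(round(horizon / dt))):
        t = step * dt
        next_x = hero_x + init.hero_speed * dt
        signal_red = t < init.red_until
        if hero_obeys_signal and signal_red and hero_x <= init.stop_line_x:
            # Hold the hero at the stop line while the signal is red.
            next_x = min(next_x, init.stop_line_x)
        hero_x = next_x
        cross_y += init.cross_speed * dt
        if abs(hero_x) < radius and abs(cross_y) < radius:
            return True  # both cars are inside the junction at once
    return False


scene = InitialState()
factual = rollout(scene, hero_obeys_signal=True)
counterfactual = rollout(scene, hero_obeys_signal=False)
print("collision when hero stops at the signal:  ", factual)
print("collision if hero had not stopped instead:", counterfactual)
```

Because both replays start from the identical `InitialState` and differ only in the hero's action, any difference in outcome can be attributed to that action. That is the kind of ground truth a causal or counterfactual reasoning algorithm could be scored against.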

With some Python savvy, data scientists can play with CausalCity using the code on GitHub.

As the researchers note, "the scenarios created in our simulation are complex and do have reasonable visual fidelity, but [they] are still a long way from simulating realistic behavior of drivers" -- which, as most of us who are regularly on the roads know, falls firmly into Hofstadter's definition of "a situation in the world... that has no boundaries at all."