Microsoft has released a private preview of a tool called Security Copilot that it says was "shaped by the power of OpenAI's GPT-4 generative AI", with Azure CTO Mark Russinovich dubbing it a "new era in cybersecurity."

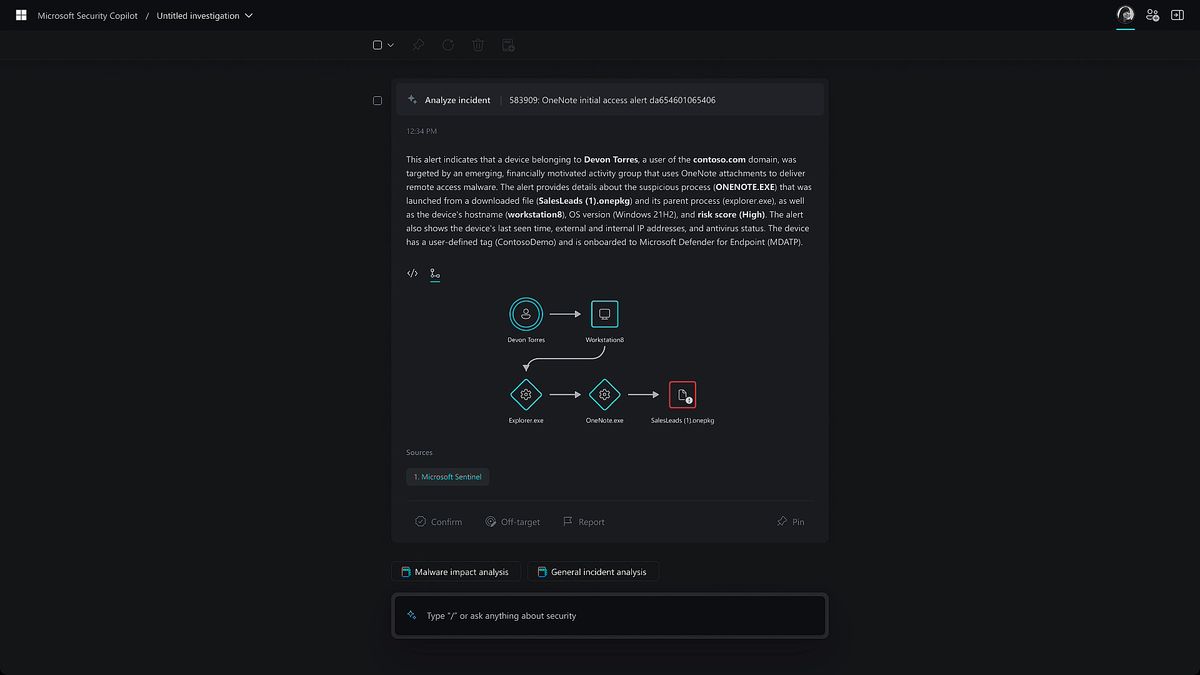

Microsoft said Security Copilot furnishes “critical step-by-step guidance and context through a natural language-based investigation experience that accelerates incident investigation and response.”

Using a chat interface, it purportedly lets users "summarize any event, incident, or threat in minutes and prepare the information in a ready-to-share, customizable report" and "identify an ongoing attack, assess its scale, and get instructions to begin remediation based on proven tactics from real-world security incidents."

The release comes despite the persistent problem that generative AI, including GPT-4, has with "hallucinating", or making up facts. It also came on the same day that Microsoft laid off thousands of staff, with security and identity teams among those hit.

https://twitter.com/merill/status/1640586175802118145

Security Copilot

In a post authored by Vasu Jakkal, corporate VP of security, compliance, identity, and management, Microsoft acknowledged that "AI-generated content can contain mistakes. But Security Copilot is a closed-loop learning system, which means it's continually learning from users and giving them the opportunity [to give feedback]."

As TechCrunch's Kyle Wiggers emphasises, Microsoft does not explicitly specify how Security Copilot incorporates GPT-4. Microsoft added that "your data is never used to train other AI models", which may or may not reassure the growing number of CISOs who are being asked to find ways to block access to ChatGPT, for fear that developers and others might upload intellectual property into it during their testing or deployments.

Microsoft, meanwhile, has eviscerated its security and identity teams as part of sweeping planned layoffs.

Merill Fernando, Principal Product Manager for Azure Active Directory, said on Twitter: "My team at Microsoft has been gutted. With half my immediate team gone and more across Identity it is the end of an era."

In other, unrelated news, the taxpayer-funded US Cybersecurity and Infrastructure Security Agency (CISA) has just released not its first but its second "incident response tool that adds novel authentication and data gathering methods in order to run a full investigation against a customer's Azure Active Directory (AzureAD), Azure, and M365 environments."

Why? In large part because AD security is hard (as one security pro puts it, "Azure environments are chock full of attack paths") and Microsoft's own tools are often inadequate to the task. As Quest's Bryan Patton notes: "[A factor that makes] Active Directory vulnerable to having attack paths is its complexity and lack of transparency.

"AD administrators have a wide range of options for granting permissions to accounts, including both directly and through Active Directory security groups, and Group Policy offers literally thousands of settings that also affect access control. At the same time, it's nearly impossible to accurately audit permissions to determine, for example, who has permissions on a particular AD object or what effective rights have been granted to a security group.

"Similarly, it can be hard to properly manage permissions to Group Policy objects (GPOs); you need to consider not only permissions [on] the GPO itself, but to the Group Policy Creator Owners group, the System/Policies folder in AD, the sysvol folder on domain controllers (DCs), and the gpLink and gpOptions attributes on the domain root and organizational units (OUs). Furthermore, it's a real challenge to uncover and overcome issues like inheritance blocking, improper link priority and failure to place computers in the correct OU."

It was not immediately clear whether Security Copilot will be genuinely useful in supporting AD and AzureAD hardening, but if it is, the cynics might just start taking notice.