Nutanix, a hyperconverged infrastructure (HCI) specialist, has been progressively building more and more of its own software on Kubernetes, said Chief Technology Officer (CTO) Manosiz Bhattacharyya, speaking with The Stack during a media briefing at the company’s NEXT conference.

“Everything that we have built new is actually on containers and Kubernetes. What we are now doing is also breaking up Prism Central [Nutanix’s central control plane]. It contains a lot of applications, along with management of HCI, it has our network controller, self-service. We're breaking these components into Kubernetes clusters. Each of these control plane parts can now be managed and upgraded independently.”

The CTO said: “We're giving a lot more flexibility to [our] app developers, who have actually in some ways been liberated from Prism, because Prism was one place where we had to deliver everything – that was becoming very difficult as the product started getting bigger and bigger.”

A platform engineering focus

It’s an example, in internal microcosm, of where many of the company’s customers also find themselves, he added – a classic shift from monolith to microservices, with enterprises containerising more and more of their applications, then creating platform engineering teams to serve reusable components and platform resources to the developers delivering them.

Thomas Cornely, SVP, Product Management, drives home the point: “What we lived through is a perfect example of what we see in large-scale companies that embraced DevOps, and let developers do whatever they want. Everybody was building on Kubernetes – that's great. But then, who's in charge of maintaining that stack, securing that stack? When there was something you needed as a service that was not part of the core stack you [developers] went and got something out of open source.

“Is it the right one now five years in? Are you supporting it yourself? We've gone through this internally. We've had to rationalise and actually get better at it [and] say there's a platform team that is going to own this.”

It’s a working shift that was also tidily captured by Saxo Bank’s Chief Cloud Architect Jinhong Brejnholt in an earlier interview with The Stack.

Brejnholt emphasised how “[With cloud-native] you need to learn not just how to package your code, but configure all infrastructure requirements together to deploy into our environment – which is super secure, meaning you cannot just ‘exec’ into the pod and start investigating [issues]. Our production environments need to be locked down way better than that! But developers are very used to thinking ‘I have a server; I have an issue; I’m going to login and check the logs.’”

This can be even more challenging given that “Things happen so fast in the Kubernetes world; components are swapping out all the time, so you have to keep an eye on the trends and tools and not just stick with the same components or you can get outdated” – all of which requires a really switched-on cloud-native platform team to support developers.

From VMs to containers…

The Nutanix executives’ comments came as the company announced a new Nutanix Kubernetes Platform (NKP) for customers that lets users manage multiple clusters, whether on Nutanix on-premises or running in non-Nutanix environments, including hyperscaler Kubernetes services. (“Top-to-bottom declarative APIs, integrated GitOps workflow, built-in security, centralized multi-cluster and multi-cloud fleet management.”)

“It’s an opportunity to run in a containerized manner on infrastructure delivered by someone else,” as Nutanix SVP Lee Caswell put it pithily – and it is the first time that Nutanix has made parts of its HCI stack available outside a hypervisor delivered or managed by the company.

The announcement was swiftly followed by the news that Nutanix would also be making its storage stack available as a native service on AWS’s Elastic Kubernetes Service (EKS). Kubernetes, it is clear, retains its draw as a way to run applications anywhere – and increasing recognition that K8s can run stateful workloads, like a database, continues to help it grow.

(As DataStax’s Patrick McFadin puts it, ultimately “Kubernetes is a powerful way to create virtual data centers quickly and efficiently.”)

Nutanix + Kubernetes: What's new?

Nutanix CEO Rajiv Ramaswami told The Stack that his company already “had the Kubernetes distribution from a runtime perspective.

“What we did not have, or what's new with NKP are, I would say largely two things. One is the ability to orchestrate all the other pieces of the puzzle you need to get a Kubernetes environment going. In addition to the base Kubernetes you need load balancers, you need roles-based access control, you need a networking stack. There are open source components available for all of these things, but they have to be stitched together; we automate all of that. Number two, is providing visibility and management of clusters in a consistent way wherever the clusters are running. Your clusters might be running on Amazon or Azure or on-prem, or on the edge. And with NKP, you get a consistent single pane of glass through which you can manage all those clusters,” says Nutanix’s CEO.

He echoes his CTO’s experience: “When we got started with Kubernetes deployments internally… we are a software company, and our engineers put a lot of that stuff together themselves right! But here's the thing that I ask even for those engineering teams: 'Is that the most productive use of your… time?' because for me, the job of the developer is to build products that I ship, not to spend time on internal infrastructure environments, because that is actually making them less productive.”

As a result, and drawing on its own experience, Nutanix has looked to create what amounts to an ultimate platform engineer’s hub for VMs and containers across a variety of environments, including public cloud.

Centralising everything via that “single pane of glass” sounds operationally compelling. But will customers not fear lock-in?

Ramaswami said that all of Nutanix’s “Kubernetes management is built with open source components. So there is no lock-in in the sense, these are the same open source components that are available as part of the CNCF, we just stitched them together and we make sure they all work together. There'll be people who want to do it themselves, which is fine. For the people who don't want to do it themselves, we automate everything.”

You could, he insists, disaggregate these components easily enough if you wanted to exit (although skills can always be a challenge in such circumstances). He added: “We now [have] three components of this modern app platform. NKP; data services at the storage level, and then PaaS services. We don't say all three have to be together; you can mix and match, you can use OpenShift, along with our PaaS or data services, for example. Our whole philosophy is around providing choice and flexibility.”

Nutanix’s CEO sounds abruptly ready to move on: “The future,” he says, is “modern applications and AI. The bread and butter here… We talked about VMware, we talked about migrations, infrastructure, all of that, right? But five years out, people are going to be focused not as much on the infrastructure but as on building these applications, enabling AI.”

The Stack sounds a sceptical note about an AI bubble.

Ramaswami is quick to respond: “Oh there’s definitely an AI bubble that's going to pop! But I do think there will be [more and more] legitimate use cases that require good financial diligence and bring TCO benefits.

“We are starting to see significant value for generative AI in enterprise use cases. But you have to plan them carefully, implement them carefully. I do feel like we are in this bubble cycle with respect to valuations…”

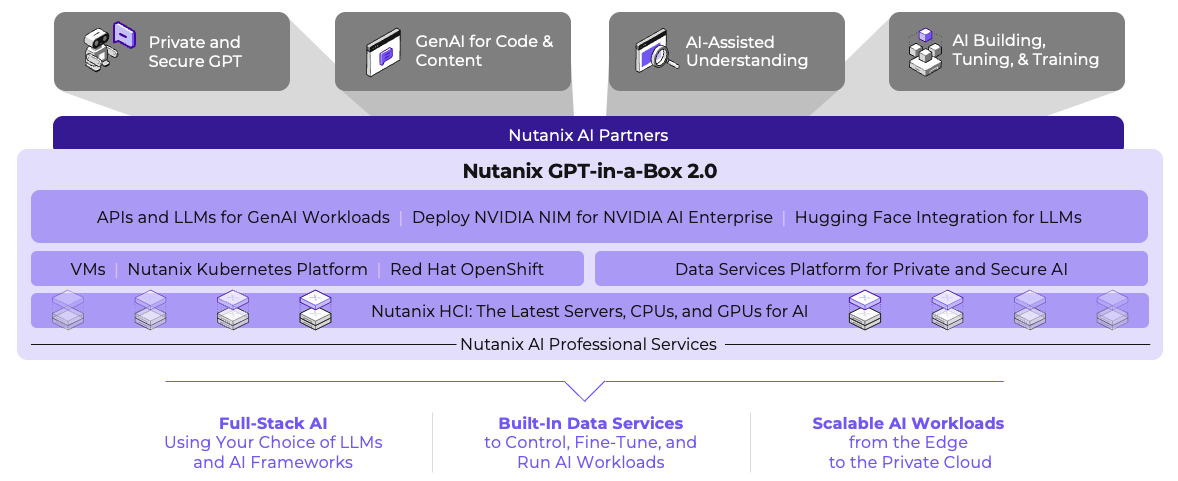

Cutting through the hype to start exploring use cases makes it all the more important that organisations can start testing generative AI out simply, he said, touting Nutanix’s new GPT-in-a-Box 2.0 as making LLMs “more enterprise ready and simple to use. We call it one click simplicity for people to be able to download a model from a repository like Nvidia or Hugging Face, instantiate an AI inference endpoint and connect an application to it, automate all of that, and deliver that rich GPT toolbox.”

Yes, you can do this with Kubernetes
