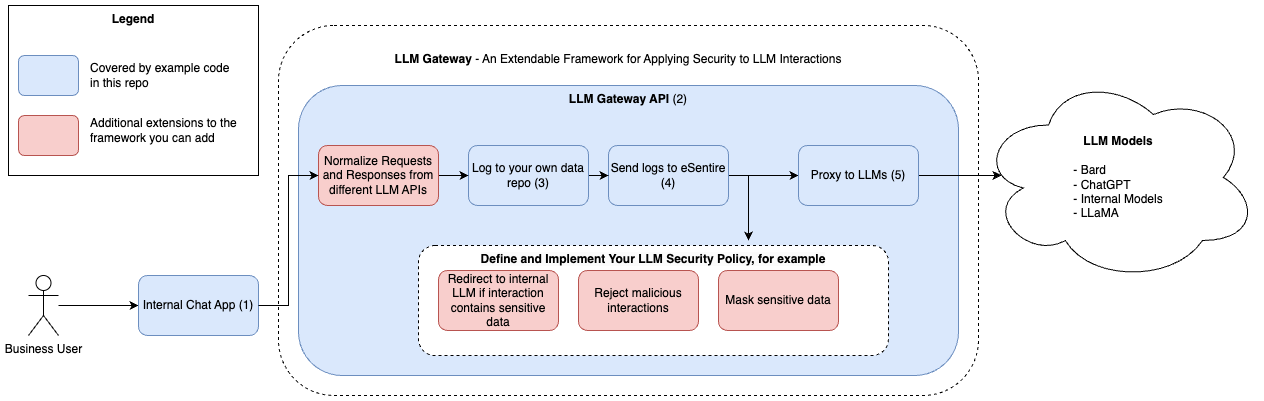

Canadian managed detection and response (MDR) specialist eSentire has open sourced a framework designed to make the use of large language models (LLMs) safer. The framework draws on the company's own experience deploying AI in its operations.

eSentire says that its open source LLM gateway will help companies better manage AI model security. Its release is part of a larger push by the company to advance security within the LLM and broader AI fields.

"You can write a function that calls an LLM, but you can't inspect how the LLM is going to make a decision, just like asking for user input," eSentire Labs VP Alex Feick told The Stack. "Defenders must consider that any output that is returned from an LLM could be hostile."

Whereas conventional IT threats include network intrusions, securing AI requires paying special attention to data. To that end, eSentire says its gateway can help detect common attacks, such as data poisoning, that can undermine LLMs and other AI implementations.

"The customer will be notified, and via our other [managed detection and response] capabilities eSentire could take containment actions at the User or Asset level," said Feick.

"Being able to detect LLM 'poisoning' requires organisations to have some form of LLM gateway so your company’s LLM interactions can be logged and reviewed by security teams."
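To illustrate what "logged and reviewed" can mean in practice, here is a minimal Python sketch of a gateway that sits between an application and a model and records each prompt and response for later review. The `LoggingGateway` class, its log fields, and the stubbed backend are hypothetical illustrations, not eSentire's code or API.

```python
import json
import time
from typing import Callable, List

# Hypothetical type: any function that takes a prompt and returns the model's text.
LLMCall = Callable[[str], str]


class LoggingGateway:
    """Illustrative gateway that forwards prompts and records every exchange.

    A hypothetical sketch only; not eSentire's implementation.
    """

    def __init__(self, backend: LLMCall, user: str) -> None:
        self.backend = backend
        self.user = user
        self.log: List[str] = []  # JSON lines a security team could review later

    def complete(self, prompt: str) -> str:
        response = self.backend(prompt)
        # Record who asked what and what came back, so interactions can be audited.
        self.log.append(json.dumps({
            "ts": time.time(),
            "user": self.user,
            "prompt": prompt,
            "response": response,
        }))
        # The response is returned as plain data; callers should treat it as
        # untrusted input and validate it before acting on it.
        return response


# Usage with a stub backend standing in for a real model call.
gateway = LoggingGateway(backend=lambda p: p.upper(), user="alice")
reply = gateway.complete("summarise today's alerts")
```

Routing every model call through one chokepoint like this is what makes review possible: if a model starts returning poisoned or hostile output, the log shows which user, prompt, and response were involved.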

Part of the key to that visibility is open source software. Feick said the eSentire gateway is open source by design, to provide better visibility into an emerging field.

"We have made it open source because we want to give users better transparency in how LLM logging and other security measures can be implemented within an LLM gateway," Feick explained.

"We wanted to provide security practitioners with a prototype so they could better understand the value of a gateway and explore the capabilities an organization might want to look for when choosing a commercial gateway."

eSentire is making its gateway code available on GitHub.