On January 12, Microsoft detected an uninvited and malevolent guest in its corporate IT systems. Within days it attributed the raid on emails of its “senior leadership” and cybersecurity team to Russia’s Foreign Intelligence Service, or SVR. The group has been attacking IT service providers in Europe and the US – HPE is another victim – and double-digit numbers of organisations are anticipated to disclose breaches in coming weeks.

Microsoft on January 25 published a swift analysis of how the SVR hit its systems and said it was notifying other victims. Not everybody was impressed. As former Facebook CSO Alex Stamos put it: “Microsoft's language here plays this up as a big favor they are doing the ecosystem by sharing their ‘extensive knowledge of Midnight Blizzard’” (Microsoft’s term for the threat actor) “when, in fact, what they are announcing is that this breach has affected multiple tenants of their cloud products.”

Stamos, who now works at Microsoft rival SentinelOne, said the attackers’ technique “only work[s] on Microsoft-hosted cloud identity and email services… other companies were compromised using the same flaws in Entra (better known as Azure Active Directory) and Microsoft 365.”

Microsoft’s January 19 post-mortem was thin on details about how the attackers moved from cracking the password on what Microsoft described as a “legacy, non-production test tenant account” to the emails of its senior leaders. But security researchers are starting to fill in the gaps, and lessons abound for those looking to improve their enterprise security, even if being targeted by a nation state seems like a remote prospect.

(Microsoft is a $211 billion annual revenue company with a large security team. If it can leave neglected “legacy” test tenants sitting without MFA, so can anyone. If there is a widely repeatable path from such a tenant to Domain Admin, it serves everyone to understand what that might be.)

Below is a synopsis of the attack, some informed speculation about the attackers’ lateral movement that may be useful for network defenders, why Microsoft is facing blistering criticism despite its swift response, and some hopefully clear and actionable takeaways for security professionals.

The attack on Microsoft, digested

1: The SVR breached a Microsoft “test tenant” account, left sitting with no MFA and without a robust password. They tailored their “password spray” attacks to a limited number of accounts, using a low number of attempts to evade detection and avoid blocks based on the volume of failures. They attacked via a “distributed residential proxy infrastructure” – routing traffic through a “vast number” of IP addresses to evade detection – and eventually hit paydirt. (Microsoft has not said what the password was or how many attempts it took before the hackers hit the jackpot.)


2: Once they compromised a user account, they found a “legacy” test OAuth application that had “elevated access to the Microsoft corporate environment.” Microsoft does not name this application in its report. The SVR then spun up new malicious OAuth applications, created a new user account to grant consent to them, and ultimately landed the Office 365 Exchange Online full_access_as_app role, which allows access to mailboxes. (There are lots of gaps even in Microsoft’s full-fat version of this lateral movement, not just our short synopsis. More details for security teams are below…)
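The low-volume, many-IP password spray described in step 1 is designed to slip under per-IP failure thresholds, so one way to look for it is to count distinct source IPs per targeted account instead. The following is a minimal sketch of that idea, not Microsoft's detection logic: the function name, the `(timestamp, account, source_ip)` record shape, and the thresholds are all illustrative assumptions, and real sign-in logs would need to be mapped into this shape first.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def find_low_and_slow_targets(failures, window=timedelta(days=7),
                              min_distinct_ips=5, max_per_ip=2):
    """Flag accounts whose failed sign-ins come from many IPs, few tries each.

    failures: iterable of (timestamp, account, source_ip) tuples for FAILED
    sign-ins only. This record shape and the thresholds are assumptions
    made for illustration.
    Returns {account: number_of_distinct_source_ips}.
    """
    by_account = defaultdict(list)
    for ts, account, ip in failures:
        by_account[account].append((ts, ip))

    flagged = {}
    for account, events in by_account.items():
        events.sort()
        latest = events[-1][0]
        # Only consider failures inside the look-back window.
        attempts_per_ip = defaultdict(int)
        for ts, ip in events:
            if latest - ts <= window:
                attempts_per_ip[ip] += 1
        # Many sources, each staying below a typical lockout threshold.
        if (len(attempts_per_ip) >= min_distinct_ips
                and max(attempts_per_ip.values()) <= max_per_ip):
            flagged[account] = len(attempts_per_ip)
    return flagged


if __name__ == "__main__":
    start = datetime(2023, 11, 20)
    sample = [(start + timedelta(hours=12 * i), "legacy-test", f"203.0.113.{i}")
              for i in range(6)]
    print(find_low_and_slow_targets(sample))
```

A noisy brute-force attempt from a single address would not be flagged here, since it fails the distinct-IP test; that case is what conventional volume-based blocks already cover.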

MS Graph to blame?

So: One minute the attackers were chipping away at passwords to some exposed tenant, the next they were merrily reading everyone's emails. What was this legacy test OAuth application that had “elevated access to the Microsoft corporate environment”? What can we learn here?

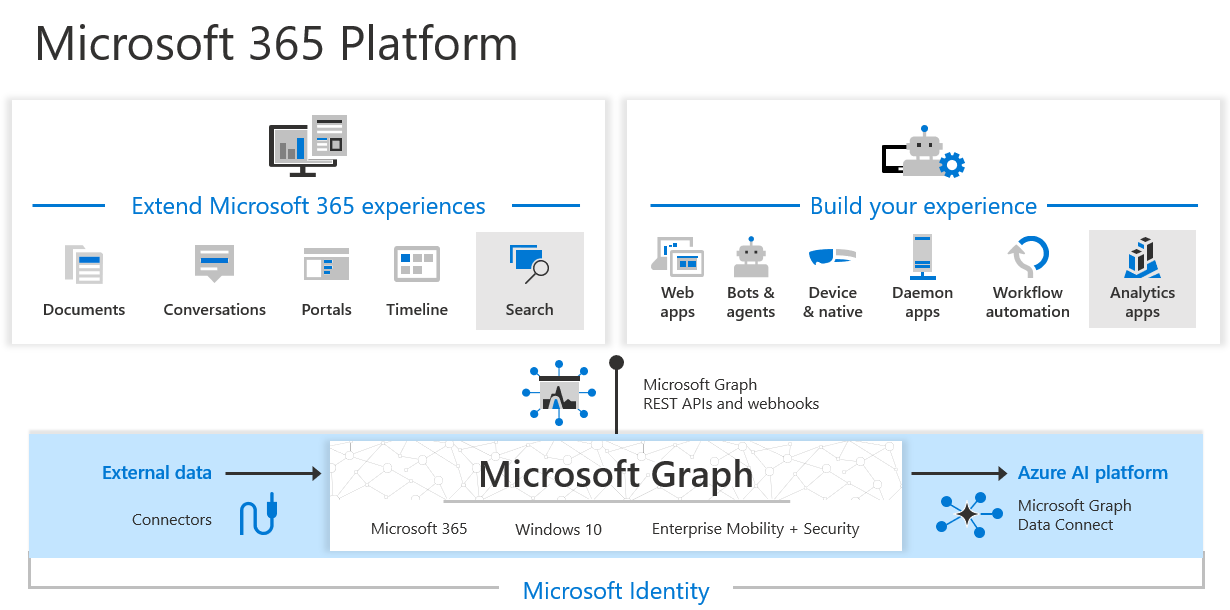

Speculation is mounting that the attackers may have made use of MS Graph, which Microsoft describes in its literature as “the gateway to data and intelligence in Microsoft 365” and which powers “the Microsoft 365 platform.” (It was previously known as the Office 365 Unified API and exposes REST APIs and client libraries to access data on a sweeping range of Microsoft cloud services, including emails via Outlook/Exchange, and security tools like Microsoft Entra ID, Identity Manager, and Intune.)

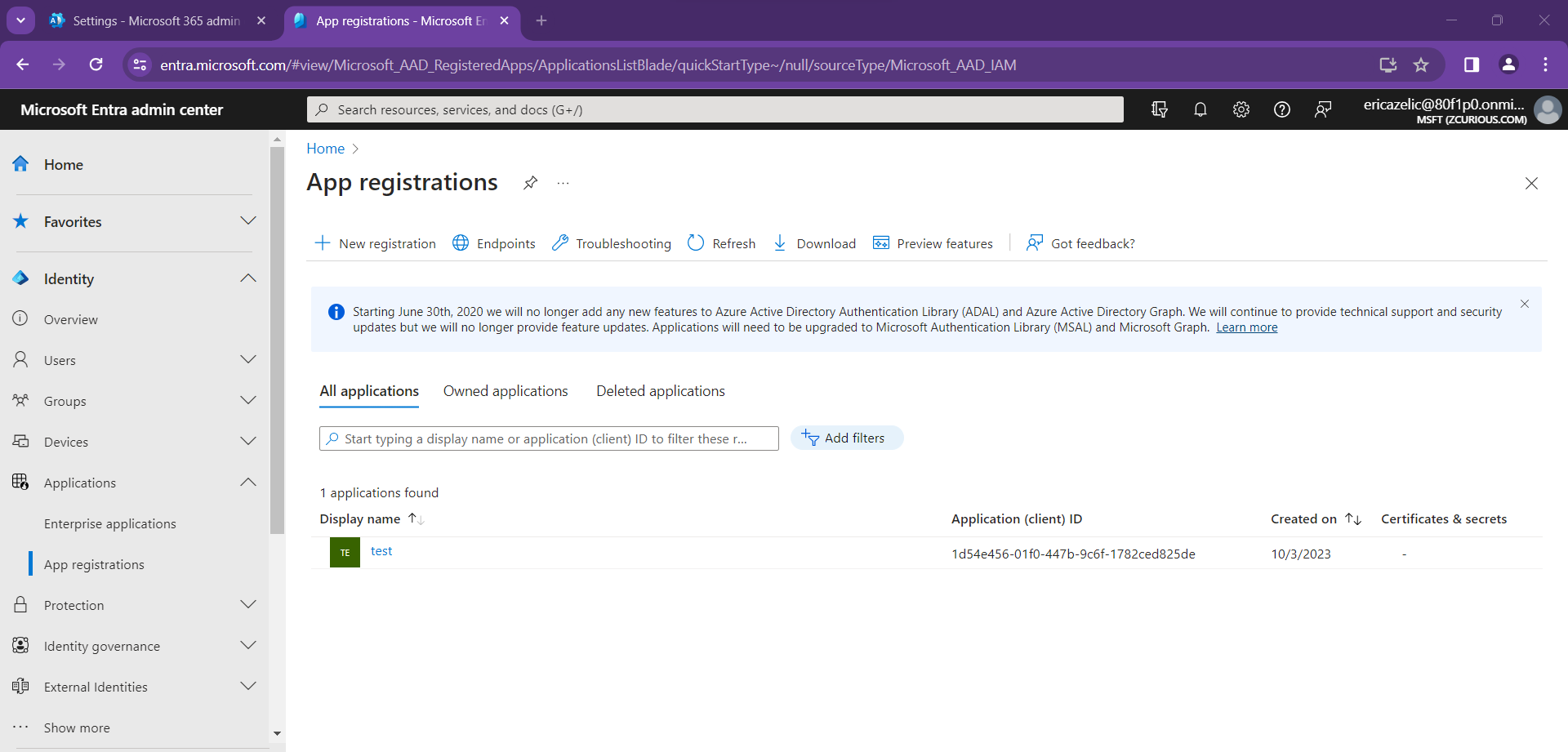

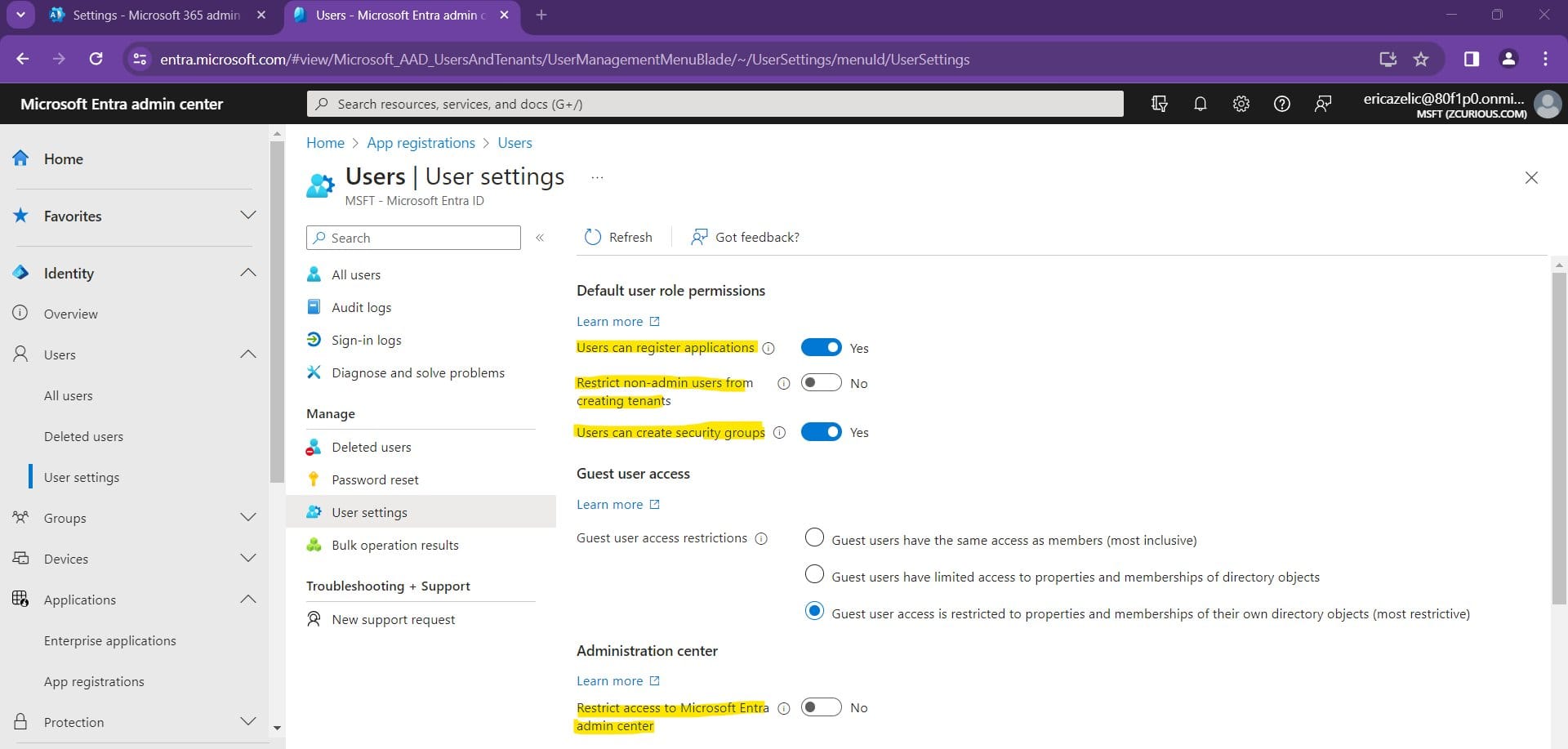

As one Blue Team security professional put it in a widely appreciated thread on X: "Some of the problems I see in this report, I SEE EVERYWHERE due to VULNERABLE DEFAULTS. Let's start with creating malicious OAuth applications. By default, ANY USER can create app registrations and consent to Graph permissions as well as sharing third-party company data," adding that "in tenants where this is hardened, ability to create app registrations require Application Administrator or Cloud-Application Administrator and admins must consent to permissions used by the application whether local or from another tenant."

(More on that below...)

They added: "FFS. ALL ADMINS SHOULD HAVE PHISHING RESISTANT MFA! Also, stop signing in from unmanaged devices with privileged accounts! You check private email or browse to a [site] and get hacked, attacker gains access to your admin tokens and tenant! Use a dedicated secured device to access your MS Cloud as an admin..."
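The defaults criticised in that thread can be checked against a tenant's authorization policy. The sketch below assumes the policy has already been fetched (for example from the Microsoft Graph `policies/authorizationPolicy` endpoint) and parsed into a dictionary. The field names `defaultUserRolePermissions`, `allowedToCreateApps` and `permissionGrantPoliciesAssigned` reflect our reading of the Graph documentation and should be verified against the current API reference. The function name and finding messages are hypothetical.

```python
def audit_authorization_policy(policy):
    """Return a list of findings for permissive app/consent defaults.

    policy: dict parsed from a tenant's authorization policy JSON.
    Field names are assumptions based on the Microsoft Graph docs.
    """
    findings = []
    perms = policy.get("defaultUserRolePermissions", {})

    # Assumption: a missing value is treated as the permissive default.
    if perms.get("allowedToCreateApps", True):
        findings.append("Any user can create app registrations")

    # Assumption: policy IDs containing 'microsoft-user-default' mean
    # ordinary users may consent to some permissions themselves.
    grant_policies = perms.get("permissionGrantPoliciesAssigned", [])
    if any("microsoft-user-default" in p for p in grant_policies):
        findings.append("Users can consent to app permissions without an admin")

    return findings


if __name__ == "__main__":
    permissive = {
        "defaultUserRolePermissions": {
            "allowedToCreateApps": True,
            "permissionGrantPoliciesAssigned": [
                "ManagePermissionGrantsForSelf.microsoft-user-default-legacy"
            ],
        }
    }
    for finding in audit_authorization_policy(permissive):
        print("FINDING:", finding)
```

An empty list from this check does not mean the tenant is hardened; it only means these two specific defaults appear to have been changed.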

Microsoft security specialist Andy Robbins – co-creator of BloodHound, an application used to map Active Directory attack paths and permissions – told The Stack that it appeared from Microsoft’s security guidance in the post-mortem that the attackers had “used the "legacy test OAuth application" to "grant themselves" the full_access_as_app MS Graph app role. This role lets the application read all user inboxes without requiring the users to authenticate to the application itself.”

He added in a DM on X: “The AppRoleAssignment.ReadWrite.All MS Graph app role *BYPASSES* the consent process. So if the "legacy application" had *just this one* MS Graph app role, it would be able to grant itself *full control* of the *entire Microsoft corporate tenant*.”

Robbins’s analysis, which he admits is speculative, deserves a read in its entirety and we have captured it in the breakout box immediately below.

BloodHound creator Andy Robbins' view in full

The lack of precise details is a bit frustrating, but the "facts" they shared, from what I can tell, outline the following timeline:

1. SVR achieved initial access into a "legacy, non-production test tenant" in late November 2023. They achieved this through a password guessing attack.

2. SVR used their initial access to "compromise" a "legacy test OAuth application". I interpret this to mean SVR gained the ability to add a credential to an existing app registration in the "test tenant". There are several ways to achieve this. For example, the initial user they compromised may have had the "Cloud Application Administrator" Entra ID role, which allows principals to add new credentials to all existing app registration and service principal objects in that tenant.

3. Microsoft states that the "legacy test OAuth application" had "elevated access to the Microsoft corporate environment". Let me pause here to say that this is the most critical and obscure part of the attack path. This statement could mean many different things. Let me finish the remaining timeline and come back to this point after providing more context.

4. SVR created additional OAuth applications in the Microsoft corporate tenant. Microsoft's statements don't attempt to explain *why* SVR did this. As a red teamer, if it were me executing this attack path, I would be performing this action in order to set up long-term persistence in the Microsoft corporate tenant. I interpret this step as a persistence procedure, not a privilege escalation procedure.

5. SVR created a new user account in the Microsoft corporate tenant. Again I see this as a persistence step, not privilege escalation.

6. SVR used this new user account to "grant consent in the Microsoft corporate environment to the actor controlled malicious OAuth applications". Again, persistence, but also this could be done to try and bring less attention to their initial access OAuth application when they perform their data exfiltration actions later.

7. SVR used the "legacy test OAuth application" to "grant themselves" the full_access_as_app MS Graph app role. This role lets the application read all user inboxes without requiring the users to authenticate to the application itself. Let me now go back to the critical part of this attack path which I numbered "3" above.

I interpret Microsoft's statements to mean that there was an existing application registration in the "legacy, non-production test tenant" that had been instantiated into the Microsoft corporate Entra ID tenant as a service principal (also known as an Enterprise Application). At some point in the past, an administrator of the corporate Entra ID tenant granted this service principal (and therefore its corresponding app registration in the "legacy" tenant) a very high amount of privilege.

Now, while SVR took the following actions in the Microsoft corporate tenant:

- Created a user

- Created new applications

Those actions pale in comparison to the power expressed by this action:

- Granting the full_access_as_app role

This action requires extremely high amounts of privilege. There are only three Entra ID roles that have this power:

1. Global Administrator

2. Partner Tier2 Support (a hidden, "legacy" role)

3. Privileged Role Administrator

There are only two other app roles that have this power:

1. AppRoleAssignment.ReadWrite.All

2. RoleManagement.ReadWrite.Directory

Now, this is where we can start to make some educated conjecture about what the existing configuration was - in other words, what the critical path was that let SVR turn their initial access in the "test tenant" into being able to read all emails for all accounts in the Microsoft corporate tenant. There is one more thing to mention before moving on: the "full_access_as_app" role requires admin consent. This means only the most privileged principals can effectively grant this role.

From all of this information and context, I see two possibilities:

1. The "legacy application" from the "test tenant" had either the Global Administrator, Partner Tier2 Support, or Privileged Role Administrator Entra ID role in the Microsoft corporate tenant.

OR it had the RoleManagement.ReadWrite.Directory MS Graph app role in the Microsoft corporate tenant.

Any one of those roles would enable *all* of the follow-on actions SVR took, from creating users and apps, to granting the full_access_as_app role to an app.

OR 2. The "legacy application" from the "test tenant" had the AppRoleAssignment.ReadWrite.All MS Graph app role.

Remember how I said the full_access_as_app role requires admin consent? Well, the AppRoleAssignment.ReadWrite.All MS Graph app role *BYPASSES* the consent process. So if the "legacy application" had *just this one* MS Graph app role, it would be able to grant itself *full control* of the *entire Microsoft corporate tenant*.

In my opinion, it is more likely the "legacy application" had this AppRoleAssignment.ReadWrite.All MS Graph app role.
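For defenders who want to check whether any application in their own tenant holds the app roles Robbins singles out, the following is a minimal sketch. It assumes the data has already been exported: `resource_app_roles` mirrors the `appRoles` list on a resource service principal (each entry has an `id` and a `value`), and `assignments` mirrors app role assignment objects (with `appRoleId` and `principalDisplayName`). Those field names follow our understanding of the Microsoft Graph object shapes. The function name, the sample IDs and the application names are hypothetical placeholders, not real identifiers.

```python
# App role names taken from the analysis above.
HIGH_RISK_APP_ROLES = {
    "AppRoleAssignment.ReadWrite.All",
    "RoleManagement.ReadWrite.Directory",
    "full_access_as_app",
}


def flag_risky_assignments(resource_app_roles, assignments):
    """Return (application name, app role name) pairs that deserve review.

    resource_app_roles: list of {"id": ..., "value": ...} dicts, as exported
        from the resource service principals' appRoles (assumed shape).
    assignments: list of {"appRoleId": ..., "principalDisplayName": ...}
        dicts, as exported from app role assignments (assumed shape).
    """
    # Assignments reference roles by ID, so resolve IDs to names first.
    name_by_id = {role["id"]: role["value"] for role in resource_app_roles}

    risky = []
    for assignment in assignments:
        role_name = name_by_id.get(assignment["appRoleId"])
        if role_name in HIGH_RISK_APP_ROLES:
            risky.append((assignment["principalDisplayName"], role_name))
    return risky


if __name__ == "__main__":
    # Placeholder IDs for illustration only; real role IDs are GUIDs.
    roles = [
        {"id": "role-1", "value": "AppRoleAssignment.ReadWrite.All"},
        {"id": "role-2", "value": "User.Read.All"},
    ]
    grants = [
        {"appRoleId": "role-1", "principalDisplayName": "legacy-test-app"},
        {"appRoleId": "role-2", "principalDisplayName": "hr-directory-sync"},
    ]
    for app, role in flag_risky_assignments(roles, grants):
        print(f"REVIEW: {app} holds {role}")
```

This covers only app roles. The three Entra ID directory roles listed above (Global Administrator, Partner Tier2 Support and Privileged Role Administrator) are assigned through a different mechanism and would need a separate check.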

There is clearly work to be done on defaults in Azure Active Directory, which Microsoft now calls Entra ID after a 2023 rebrand.

As former Facebook CSO Stamos – who admittedly has some skin in the game in his role for Microsoft Security rival SentinelOne – noted on LinkedIn: "AzureAD is overly complex, and lacks a UX that allows for administrators to easily understand the web of security relationships and dependencies that attackers are becoming accustomed to exploiting. In many organizations, AzureAD is deployed in hybrid mode, which combines the vulnerability of cloud (external password sprays) and on-premise (NTLM, mimikatz) identity technologies in a combination that smart attackers utilize to bounce between domains, escalate privilege and establish persistence.

"Calling this a 'legacy' tenant is a dodge; this system was clearly configured to allow for production access as of a couple of weeks ago, and Microsoft has an obligation to secure their legacy products and tenants just as well as ones provisioned today. It's not clear what they mean by 'legacy', but whatever Microsoft's definition it is likely to be representative of how thousands of their customers are utilizing their products."

He added: "These sentences in the blog post deserve a nomination to the Cybersecurity Chutzpah Hall of Fame, as Microsoft recommends that potential victims of this attack against their cloud-hosted infrastructure:

- "Detect, investigate, and remediate identity-based attacks using solutions like Microsoft Entra ID Protection.

- Investigate compromised accounts using Microsoft Purview Audit (Premium).

- Enforce on-premises Microsoft Entra Password Protection for Microsoft Active Directory Domain Services.”

"Microsoft is using this announcement as an opportunity to upsell customers on their security products, which are apparently necessary to run their identity and collaboration products safely! This is morally indefensible, just as it would be for car companies to charge for seat belts or airplane manufacturers to charge for properly tightened bolts. It has become clear over the past few years that Microsoft’s addiction to security product revenue has seriously warped their product design decisions, where they hold back completely necessary functionality for the most expensive license packs or as add-on purchases."

Agree or disagree, it might be time to check those permissions, audit your applications in Entra ID and do some hardening.